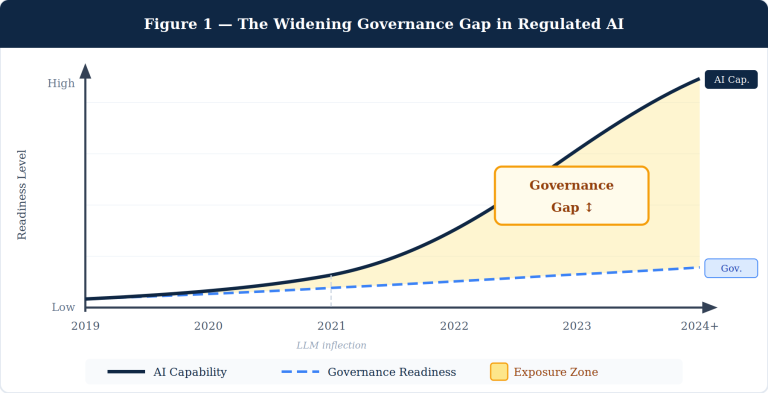

Most clinical AI deployed in hospitals today is mistimed, miscalibrated, and unmeasured. Mistimed because it speaks at the moment of order entry — when the clinician’s mind is already made up. Miscalibrated because it treats a quiet morning the same as a triage surge. Unmeasured because it almost never tracks whether its recommendation actually changed care. Override rates approaching 96% on medication alerts are not a clinician problem. They are an architecture problem.

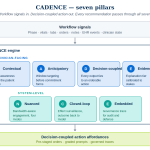

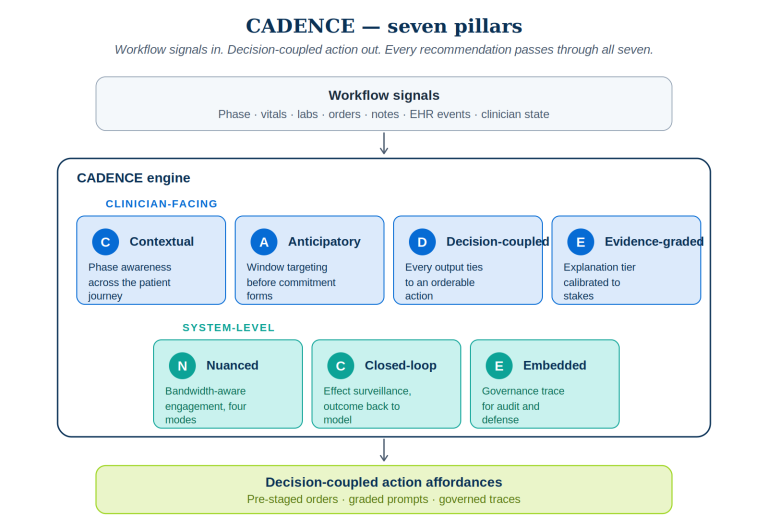

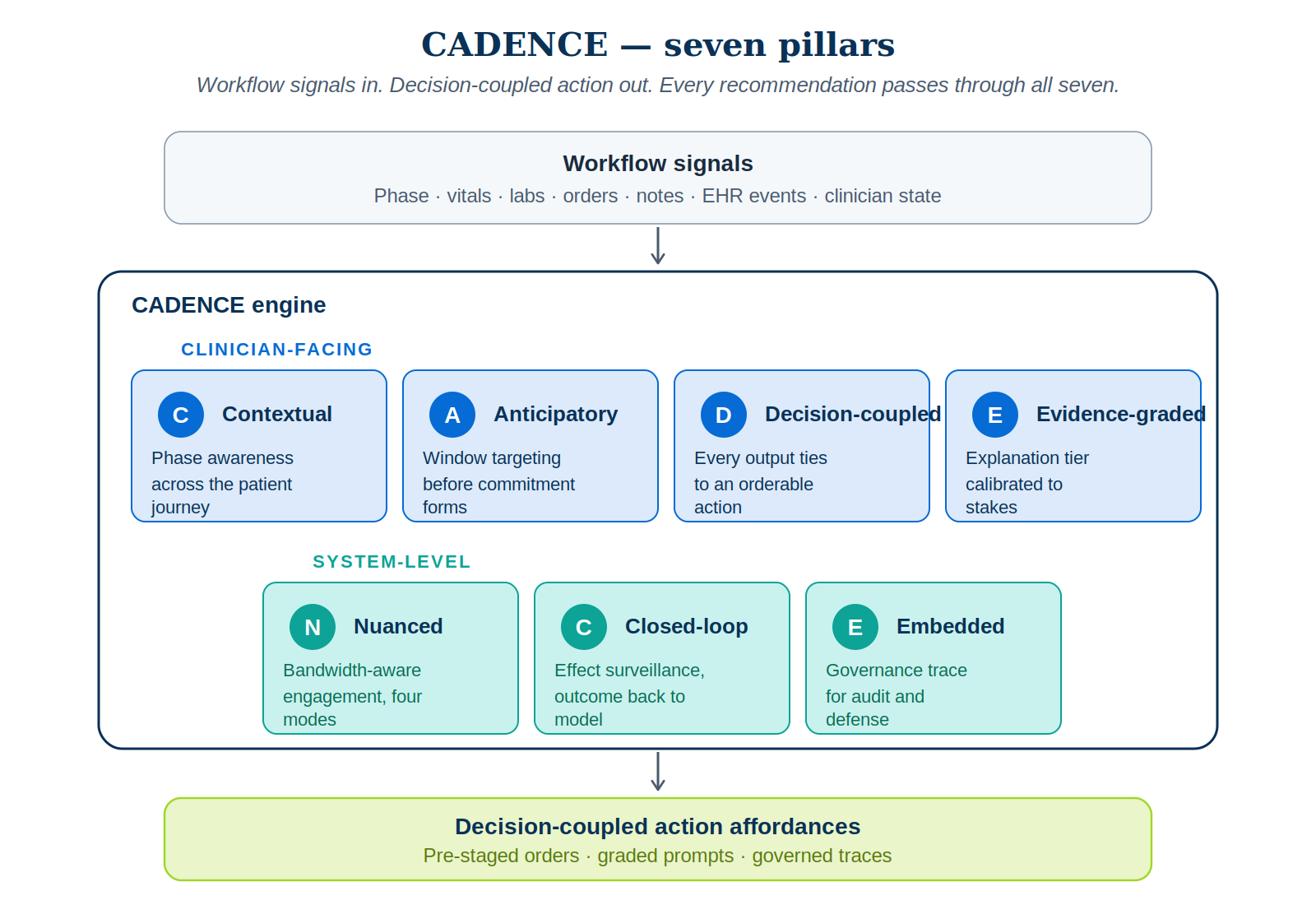

This article lays out CADENCE — a seven-pillar architecture for clinical AI that intervenes in the pre-decisional window, couples every output to a concrete action, calibrates engagement to clinician bandwidth, and closes the loop on whether the recommendation moved care. CADENCE is not a replacement for CDS Hooks, FHIR, or the Five Rights of CDS. It is the delivery discipline that sits on top of those standards and decides — for every recommendation, every time — when to speak, how loudly, with what action attached, and how to learn from what happened next.

Why most clinical AI speaks too late

The clinical AI literature is thick with models that score impressively on retrospective cohorts. The same literature is thin on production deployments that demonstrably change care. The gap between predictive accuracy and clinical impact is the central problem of contemporary clinical AI — and it is fundamentally an integration problem, not a model-quality problem.

Three numbers anchor it:

- Override rates of up to 96% on medication-related CDS alerts. Current systems generate high alert volumes with limited clinical relevance, producing the alert fatigue that compromises patient safety because clinicians stop attending to the alerts that matter.

- Sepsis prediction models with AUROCs above 0.95 often fail to carry over into routine clinical practice. The peer-reviewed literature points to workflow integration, not predictive performance, as the rate-limiting step.

- Over 1,000 FDA-authorized AI/ML medical devices by the end of 2024. Many face the same workflow-integration friction as legacy CDS, because they are added to existing alert streams without any architectural change to how the recommendation reaches the clinician.

Better models, on their own, do not produce better decisions. The integration architecture does.

The four canonical frameworks — and what they leave on the table

Four frameworks dominate the field. Each contributes essential ideas. None provides an architecture sufficient for modern AI integration.

The Five Rights of CDS (Osheroff, 2007) — right information, right person, right format, right channel, right time — is the closest analog to CADENCE. But the Five Rights is a set of principles, not an architecture. It enumerates “right time” without operationalizing it. It does not distinguish pre-decisional from at-decisional, and it offers no mechanism for graceful degradation when the clinician is overloaded.

The Ten Commandments for Effective CDS (Bates et al., 2003) was prescient about anticipating needs and monitoring impact. Twenty years later, those two commandments remain the most violated. The Ten Commandments is wisdom, not specification.

HL7 CDS Hooks is the dominant delivery standard, and an excellent one. It defines event triggers — patient-view, order-select, order-sign, encounter-start — at which an EHR calls a CDS service and renders cards in the workflow. CDS Hooks solved the integration plumbing. The structural limitation is that nearly all of its hooks are at-decisional or post-decisional. There is no pre-decisional-intent-forming hook, because intent formation is not a discrete event the EHR knows how to detect.

The Sittig & Singh eight-dimension sociotechnical model is the canonical framework for analyzing health IT safety — rigorous, widely applied, indispensable. But it is a diagnostic and reporting framework, used for understanding what went wrong or specifying what to study. It is not a delivery architecture for live AI inference in workflow.

The gap that remains is an architecture that is anticipatory rather than reactive, phase-aware across the patient journey, cognitively calibrated to the clinician’s current state, decision-coupled to actionable artifacts, closed-loop on whether it actually changed care, and governance-embedded for regulatory defensibility under the FDA’s 2026 CDS guidance and ONC’s HTI-1 transparency requirements.

That gap is what CADENCE fills.

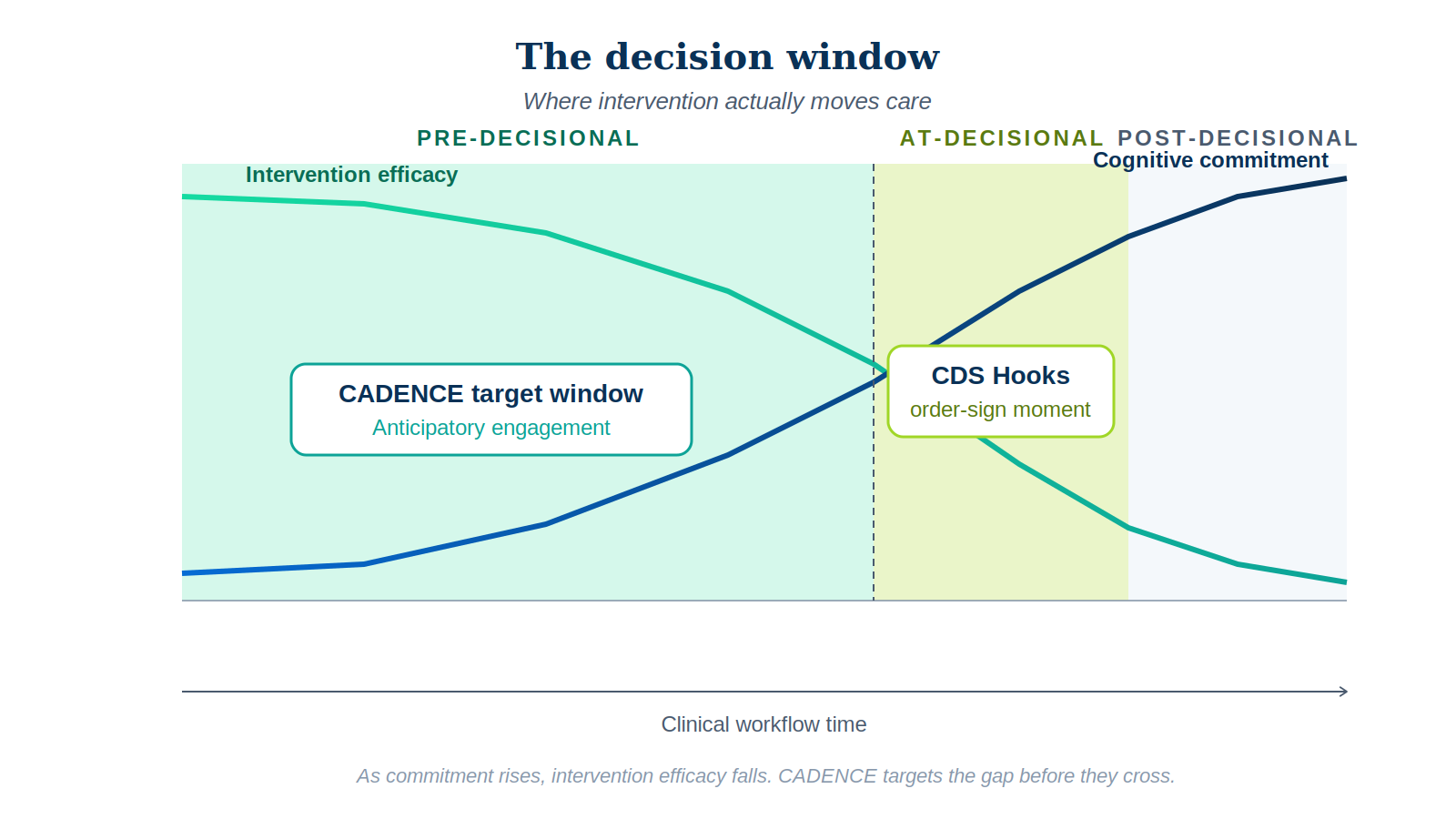

The decision window — where intervention actually moves care

Clinical decisions do not happen at a point in time. They happen across a window that opens when the clinician begins forming intent and closes when the order is signed. Across that window, two things move in opposite directions.

Cognitive commitment to a path rises monotonically. As the clinician reviews evidence, considers options, and narrows toward a choice, the cost of switching paths rises sharply. By the time the order is being placed, the clinician has constructed a working hypothesis and is executing on it, not deliberating over it.

Intervention efficacy falls in mirror image. An AI suggestion presented at intent-formation lands as navigation. The same suggestion presented at order-sign lands as disruption, because it requires the clinician to undo cognitive work already completed.

Together, these two curves map the decision window and divide the workflow around it into three operationally distinct zones:

- Pre-decisional. Clinician is gathering information and forming intent. High malleability, low commitment. The CADENCE target zone.

- At-decisional. Order being placed, commitment forming. Moderate malleability, high commitment. The zone where CDS Hooks and most existing CDS systems intervene.

- Post-decisional. Order signed, commitment locked. Useful only for audit, retrospective surveillance, and systemic learning — almost never for changing the immediate decision.

The highest-leverage intervention zone is before the EHR knows the clinician is making a decision.

This single reframing drives the entire architecture below.

CADENCE — a seven-pillar architecture

CADENCE is built around seven pillars. The first four govern the clinician-facing surface. The last three govern system integrity, learning, and defensibility. No pillar is optional. A recommendation that ignores any one of them — for instance, an output without a coupled action, or a model invocation without a governance trace — is not a CADENCE recommendation. It is the kind of noise the workforce has correctly learned to ignore.

1. Contextual phase awareness

The system must know where the patient is in the journey before it speaks. Triage decisions, rounding decisions, order-composition decisions, handoff decisions, discharge-planning decisions, and post-discharge surveillance decisions all carry different rhythms, different cognitive loads, and different appropriate intervention modes. A 30-day readmission risk score during morning triage is mistimed; the same score during late-day discharge planning is precisely timed.

2. Anticipatory window targeting

Rather than waiting for order-select to fire, the system predicts from clinician behavior, note text, lab patterns, and phase that a decision is forming, and engages in the pre-decisional zone. Two technical approaches make this tractable. Behavioral precursor detection works on the live note buffer: text patterns (“considering antibiotics,” “may need imaging”), repeated lab-result openings, and narrowing differentials are signals that intent is forming. Phase-conditioned priors handle the inevitable: a DVT prophylaxis decision within four hours of admission, a serious-illness conversation prompt at a high-mortality oncology visit. The recommendation is pre-staged, in ambient mode, where the clinician will arrive, rather than fired as an interrupt when they get there.
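To make behavioral precursor detection concrete, here is a minimal sketch of the text-pattern half of it. It assumes plain regular expressions over the note buffer; a real detector would more likely use a clinical NLP model and combine text with navigation telemetry. The names `INTENT_PATTERNS`, `IntentSignal`, and `detect_forming_intent` are hypothetical, and only the two quoted phrases come from the examples above.

```python
import re
from dataclasses import dataclass

# Hypothetical pattern table: decision label -> regex over the live note text.
# The trigger phrases are illustrative, not a validated clinical vocabulary.
INTENT_PATTERNS = {
    "antibiotic_start": re.compile(
        r"\b(considering|may need|will start)\s+antibiotics?\b", re.IGNORECASE
    ),
    "imaging_order": re.compile(
        r"\b(considering|may need|will order)\s+imaging\b", re.IGNORECASE
    ),
}


@dataclass(frozen=True)
class IntentSignal:
    decision: str  # which decision appears to be forming
    evidence: str  # the matched note text, kept so the trace can show why


def detect_forming_intent(note_buffer: str) -> list[IntentSignal]:
    """Return one signal per decision whose pattern appears in the note."""
    signals = []
    for decision, pattern in INTENT_PATTERNS.items():
        match = pattern.search(note_buffer)
        if match:
            signals.append(IntentSignal(decision, match.group(0)))
    return signals


note = "Febrile, productive cough. Considering antibiotics; may need imaging."
signals = detect_forming_intent(note)
for signal in signals:
    print(signal.decision, "<-", signal.evidence)
```

Keeping the matched text alongside the label is a deliberate choice in this sketch: it gives the explanation and governance pillars something concrete to show the clinician or an auditor.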

3. Decision-coupled action affordance

This is the most under-respected pillar in current CDS. Every CADENCE output must couple to a concrete, orderable, defensible action — a pre-filled order, a one-click acceptance with the clinician retaining final responsibility, a launch link to a SMART on FHIR app, or a structured documentation template. Pure information without affordance is what the literature politely calls “education” and what frontline clinicians correctly identify as noise.
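One way to picture a decision-coupled output is as a CDS Hooks card that carries a suggestion with an executable action, rather than text alone. The sketch below builds such a response as a plain Python dictionary. The card field names (`summary`, `indicator`, `source`, `suggestions`, `selectionBehavior`, `actions`) follow the CDS Hooks card structure; the function `build_hold_card`, the identifiers, and the abbreviated MedicationRequest payload are assumptions for illustration and not a validated FHIR instance.

```python
import json
import uuid


def build_hold_card(med_request_id: str, med_display: str, rationale: str) -> dict:
    """Return a CDS Hooks-style response: one card, one coupled action."""
    suggestion = {
        "label": f"Hold {med_display} during acute illness",
        "uuid": str(uuid.uuid4()),
        "actions": [
            {
                "type": "update",
                "description": f"Place {med_display} on hold",
                # Abbreviated, illustrative FHIR R4 resource. A real action
                # would carry the complete MedicationRequest being updated.
                "resource": {
                    "resourceType": "MedicationRequest",
                    "id": med_request_id,
                    "status": "on-hold",
                    "intent": "order",
                },
            }
        ],
    }
    card = {
        "summary": rationale,  # CDS Hooks keeps summaries short (under 140 chars)
        "indicator": "info",
        "source": {"label": "CADENCE sketch (hypothetical service)"},
        "suggestions": [suggestion],
        "selectionBehavior": "at-most-one",
    }
    return {"cards": [card]}


response = build_hold_card(
    "medreq-123",  # hypothetical resource id
    "SGLT2 inhibitor",
    "Risk of euglycemic DKA in acute illness: consider hold.",
)
print(json.dumps(response, indent=2))
```

The point of the sketch is the shape, not the payload: the clinician sees a reason and a single action they can accept, and the responsibility for accepting it stays with them.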

4. Evidence-graded explanation

Every recommendation carries an explanation graded to the decision’s stakes and the clinician’s current bandwidth. The recent randomized work on AI confidence in clinical decision support is unambiguous: high-confidence explanations drive overreliance on cases where the AI is wrong; low-confidence explanations slow the clinician without changing accuracy. Explanation depth must be calibrated, not maximized. CADENCE specifies four tiers — glance-grade (a single phrase), sentence-grade (one-sentence justification), card-grade (structured features and uncertainty), and open-grade (full SMART app launch with counterfactuals). The tier is selected by Pillar 5, not by the model.

5. Nuanced bandwidth calibration

The clinician’s available cognitive bandwidth is not constant. It varies by phase, by patient complexity, by census, by recent interruption load. CADENCE estimates bandwidth in real time and degrades gracefully. The operational expression is the four-mode bandwidth ladder:

- Ambient — always-on background presence, surfaced only on hover or panel open. Used for low-stakes signals during high-load moments.

- Whisper — passive prompt in a peripheral region of the UI. Easily ignored, no modal interruption. Used when bandwidth is moderate and the suggestion is genuinely helpful but not safety-critical.

- Surface — graded interruption with a decision-coupled action card. Costs an attention switch. Reserved for clear pre-decisional opportunities or moderate-stakes safety nudges.

- Block — hard modal stop. Reserved exclusively for confirmed hard safety thresholds. The cardinal rule is that block mode is rare; if it fires more than ~1% of the time, the targeting model is broken.
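The ladder can be sketched as a small selection function. Everything numeric here is an assumption: the bandwidth score in [0, 1], the 0.5 and 0.3 cut-offs, and the default pairing of each mode with an explanation tier from Pillar 4 are placeholders that a real deployment would tune and validate. The names `Mode`, `Stakes`, `select_mode`, and `block_rate_ok` are hypothetical.

```python
from enum import Enum


class Mode(Enum):
    AMBIENT = "ambient"
    WHISPER = "whisper"
    SURFACE = "surface"
    BLOCK = "block"


class Stakes(Enum):
    LOW = 1
    MODERATE = 2
    HIGH = 3


# Assumed default pairing of engagement mode and explanation tier.
# Open-grade is left out because it is launched on clinician request.
DEFAULT_TIER = {
    Mode.AMBIENT: "glance",
    Mode.WHISPER: "sentence",
    Mode.SURFACE: "card",
    Mode.BLOCK: "card",
}


def select_mode(bandwidth: float, stakes: Stakes, hard_safety_stop: bool) -> Mode:
    """Pick an engagement mode. bandwidth is in [0, 1]; 0 means fully loaded."""
    if hard_safety_stop:
        return Mode.BLOCK  # only a confirmed hard threshold may block
    if stakes is Stakes.LOW:
        return Mode.WHISPER if bandwidth >= 0.5 else Mode.AMBIENT
    if stakes is Stakes.MODERATE:
        return Mode.SURFACE if bandwidth >= 0.3 else Mode.WHISPER
    return Mode.SURFACE  # high stakes without a hard stop still never blocks


def block_rate_ok(modes: list[Mode], ceiling: float = 0.01) -> bool:
    """Check the ~1% rule, read here as a share of all engagements."""
    if not modes:
        return True
    return sum(m is Mode.BLOCK for m in modes) / len(modes) <= ceiling


mode = select_mode(bandwidth=0.6, stakes=Stakes.LOW, hard_safety_stop=False)
print(mode.value, DEFAULT_TIER[mode])
```

Note that in this sketch Block is reachable only through the `hard_safety_stop` flag, never through stakes or bandwidth, which is one way to keep the cardinal rule structural rather than a matter of threshold tuning.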

6. Closed-loop effect surveillance

The most consequential thing existing CDS does not do is track whether its recommendations actually moved care. CADENCE makes this a first-class architectural requirement. Every recommendation carries a four-state lifecycle: presented → seen → acted-on → outcome. The acted-on state distinguishes accepted, modified, dismissed, and dismissed-with-rationale. The outcome state, measured at the right horizon (immediate, 24h, 30-day), is fed back as a supervisory signal to the targeting model. Two virtuous loops emerge: the system learns which clinicians find which recommendations useful in which phases, and systematic dismissals by senior clinicians surface to clinical governance for review long before they show up in a postmarket safety event.
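The lifecycle reads naturally as a small state machine. The sketch below is one possible encoding, assuming strictly forward transitions and outcomes keyed by the three horizons named above. The class and method names are hypothetical, and the outcome labels are free text here only to keep the example short.

```python
from enum import Enum
from typing import Optional


class State(Enum):
    PRESENTED = "presented"
    SEEN = "seen"
    ACTED_ON = "acted-on"
    OUTCOME = "outcome"


class Response(Enum):
    ACCEPTED = "accepted"
    MODIFIED = "modified"
    DISMISSED = "dismissed"
    DISMISSED_WITH_RATIONALE = "dismissed-with-rationale"


HORIZONS = ("immediate", "24h", "30-day")
NEXT_STATE = {
    State.PRESENTED: State.SEEN,
    State.SEEN: State.ACTED_ON,
    State.ACTED_ON: State.OUTCOME,
}


class RecommendationLifecycle:
    def __init__(self, recommendation_id: str) -> None:
        self.recommendation_id = recommendation_id
        self.state = State.PRESENTED
        self.response: Optional[Response] = None
        self.rationale: Optional[str] = None
        self.outcomes: dict[str, str] = {}  # horizon -> outcome label

    def _advance(self, target: State) -> None:
        if NEXT_STATE.get(self.state) is not target:
            raise ValueError(f"cannot move from {self.state.value} to {target.value}")
        self.state = target

    def mark_seen(self) -> None:
        self._advance(State.SEEN)

    def record_response(self, response: Response, rationale: Optional[str] = None) -> None:
        if response is Response.DISMISSED_WITH_RATIONALE and not rationale:
            raise ValueError("dismissed-with-rationale requires a rationale")
        self._advance(State.ACTED_ON)
        self.response, self.rationale = response, rationale

    def record_outcome(self, horizon: str, label: str) -> None:
        if horizon not in HORIZONS:
            raise ValueError(f"unknown horizon: {horizon}")
        if self.state is State.ACTED_ON:
            self._advance(State.OUTCOME)
        elif self.state is not State.OUTCOME:
            raise ValueError("an outcome needs a recorded clinician response first")
        self.outcomes[horizon] = label  # later horizons add to the same record


rec = RecommendationLifecycle("rec-001")  # hypothetical id
rec.mark_seen()
rec.record_response(Response.ACCEPTED)
rec.record_outcome("24h", "no ketoacidosis")
print(rec.state.value, rec.response.value, rec.outcomes)
```

Refusing to record an outcome before a response exists is the design choice worth keeping from this sketch: it guarantees that every training signal fed to the targeting model is tied to what the clinician actually did.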

7. Embedded governance trace

Every CADENCE invocation produces a structured trace — phase inferred, decision-window estimate, model invoked, model uncertainty, explanation tier, bandwidth mode, action presented, clinician response, linked outcome, regulatory category. The trace serves three purposes simultaneously. It is the audit artifact required under ONC’s HTI-1 final rule on predictive Decision Support Interventions. It is the defensibility artifact when a recommendation is implicated in a downstream safety event. And it is the training signal for the closed-loop pillar above.
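As a rough illustration, the ten trace fields listed above map onto a single immutable record. This is a sketch, not a schema proposal: the field names, the example values, and the added `recorded_at` timestamp are assumptions, and a production trace would use coded values rather than free strings.

```python
import json
from dataclasses import asdict, dataclass, field, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class GovernanceTrace:
    phase: str                  # phase inferred
    decision_window: str        # decision-window estimate
    model_id: str               # model invoked
    model_uncertainty: float    # model uncertainty
    explanation_tier: str       # glance, sentence, card, or open
    bandwidth_mode: str         # ambient, whisper, surface, or block
    action_presented: str       # the coupled action offered
    regulatory_category: str    # hypothetical label for the regulatory category
    clinician_response: Optional[str] = None  # filled in once the clinician acts
    linked_outcome: Optional[str] = None      # filled in at the outcome horizon
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


trace = GovernanceTrace(
    phase="admission",
    decision_window="pre-decisional",
    model_id="sglt2-hold-advisor-v1",  # hypothetical model name
    model_uncertainty=0.12,
    explanation_tier="sentence",
    bandwidth_mode="whisper",
    action_presented="hold SGLT2 inhibitor",
    regulatory_category="predictive DSI",
)
# Later events produce a new record instead of mutating the old one.
answered = replace(trace, clinician_response="accepted")
print(answered.to_json())
```

Making the record frozen and deriving updates with `replace` fits the audit purpose: the original trace remains exactly as it was written, and each later event is a new entry.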

This is where CADENCE meets PRAXIGOV™ — the governance framework I have been building under NextBrightPath for regulated agentic AI. PRAXIGOV produces Workflow Intermediate Representations (WIRs) that encode regulation, use case, and audit obligations into a runtime-executable form. CADENCE is the delivery layer that consumes those WIRs and ensures every recommendation produced at runtime traces back to its governing WIR. PRAXIGOV is design-time governance. CADENCE is run-time delivery. Together, they form a complete stack.

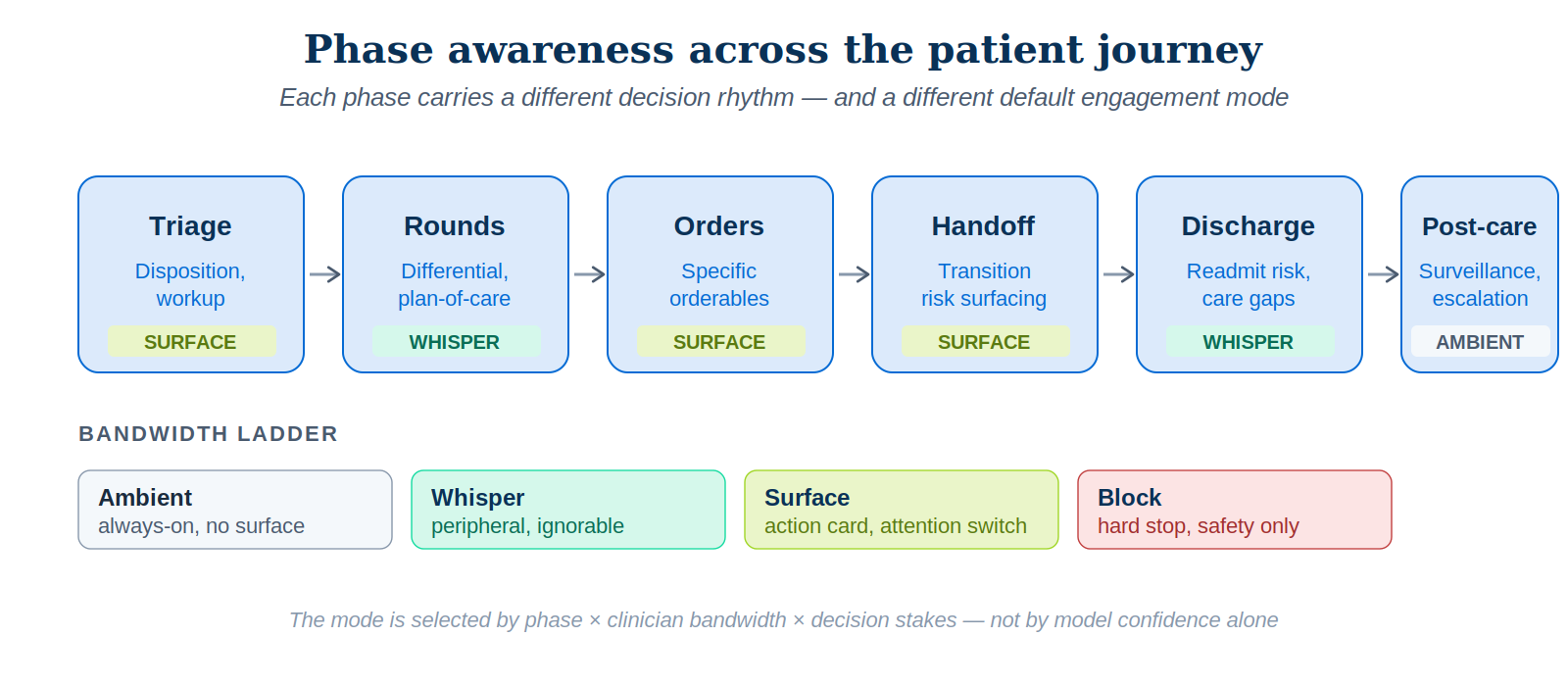

Phase awareness across the patient journey

The six phases of the inpatient journey each warrant their own engagement profile, because each carries a different decision rhythm and a different appropriate default mode.

| Phase | Dominant decisions | Default mode | Pre-decisional signal |
|---|---|---|---|
| Triage | Disposition, immediate workup | Surface | Triage scoring, vitals trajectory |
| Rounds | Differential, plan-of-care | Whisper / Ambient | Note patterns, problem list |
| Orders | Specific orderables | Surface (occasionally Block) | Order-set selection, narrowing |
| Handoff | Transition risk surfacing | Surface | I-PASS structure, omitted items |
| Discharge | Readmit risk, gaps in care | Whisper rising to Surface | Discharge-readiness signals |
| Post-care | Surveillance, escalation | Ambient | Trend monitoring |

Even within a single decision class, phase awareness matters more than model accuracy. The Eindhoven ICU workflow study (van Genuchten et al., 2025) demonstrated that the decision to discharge an ICU patient is actually made at four distinct moments per day — morning handover, morning rounds, nurse consultation, evening handover. A discharge-readiness model that fires only at one of those moments is mistargeted three times out of four. Phase awareness and anticipatory targeting are exactly what CADENCE is designed to handle.
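The phase table can be carried into code as a simple lookup that the targeting logic consults before anything is shown. The sketch below encodes the six rows as written; the `alternate_mode` field captures the second mode the table names for Rounds, Orders, and Discharge. The `TIMED_FOR` routing table and `is_well_timed` function are hypothetical and encode only the readmission-risk example from Pillar 1.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PhaseProfile:
    dominant_decisions: str
    default_mode: str
    alternate_mode: Optional[str]  # second mode named in the table, if any
    pre_decisional_signals: tuple[str, ...]


PHASE_PROFILES = {
    "triage": PhaseProfile("Disposition, immediate workup", "surface", None,
                           ("triage scoring", "vitals trajectory")),
    "rounds": PhaseProfile("Differential, plan-of-care", "whisper", "ambient",
                           ("note patterns", "problem list")),
    "orders": PhaseProfile("Specific orderables", "surface", "block",
                           ("order-set selection", "narrowing")),
    "handoff": PhaseProfile("Transition risk surfacing", "surface", None,
                            ("I-PASS structure", "omitted items")),
    "discharge": PhaseProfile("Readmit risk, gaps in care", "whisper", "surface",
                              ("discharge-readiness signals",)),
    "post-care": PhaseProfile("Surveillance, escalation", "ambient", None,
                              ("trend monitoring",)),
}

# Hypothetical routing table: the phases in which a recommendation is well timed.
TIMED_FOR = {"readmission_risk_30d": {"discharge"}}


def is_well_timed(recommendation: str, phase: str) -> bool:
    """True when the current phase is one the recommendation is routed to."""
    if phase not in PHASE_PROFILES:
        raise KeyError(f"unknown phase: {phase}")
    return phase in TIMED_FOR.get(recommendation, set())


for phase in ("triage", "discharge"):
    print(phase, is_well_timed("readmission_risk_30d", phase))
```

A discharge-readiness model that matters at several moments in the day would simply list more than one phase in its routing entry.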

A worked example — medication reconciliation at admission

Consider a common scenario. An older patient is admitted for community-acquired pneumonia. Their pre-admission medication list includes a beta-blocker, an SGLT2 inhibitor, an ACE inhibitor, and warfarin. The admitting hospitalist must reconcile.

Under conventional CDS

The hospitalist opens the admission order set. Drug-drug interaction alerts fire when the antibiotic is selected (warfarin interaction). A reminder fires on continuation of warfarin (bleeding risk). The hospitalist overrides both — warfarin’s risk-benefit is not for the alert engine to decide. The SGLT2 inhibitor decision is made silently; the model is never asked. Twelve hours later, the patient develops euglycemic ketoacidosis. The signal was in the literature. It was not surfaced because no CDS rule fired on it.

Under CADENCE

Pillar 1 infers admission phase. Pillar 2 predicts that medication reconciliation is forming as the hospitalist opens the home medication list and pulls in the admission order set. In whisper mode, a small panel adjacent to the home medication list flags the SGLT2 inhibitor with a sentence-grade explanation: “Risk of euglycemic DKA in acute illness — consider hold.” The whisper has a single-click action affordance: a pre-staged hold order for the duration of the acute illness, with auto-resume on discharge. The warfarin-antibiotic interaction is not surfaced as a redundant alert; Pillar 5 suppresses it on grounds of repeat-encounter low signal. Pillar 7 records the whole interaction. Pillar 6 observes the patient’s smooth course and back-propagates positive supervisory signal — or, if the hospitalist had dismissed the recommendation, would log the dismissal with the actual outcome attached.

The architectural difference is not subtle. Under conventional CDS, an in-the-literature signal failed to reach a decision moment because no rule existed. Under CADENCE, the phase and anticipatory pillars predicted the moment, the decision-coupling pillar staged a one-click action, the bandwidth pillar kept the engagement at whisper rather than block, and the closed-loop pillar learned from the outcome. Same model. Same data. Different architecture. Different result.

Why the architecture is regulatorily defensible

CADENCE is designed for compatibility with current and emerging regulatory expectations for clinical AI in the United States, with clear extensibility to the EU AI Act and EMA Reflection Paper expectations.

The FDA 2026 CDS guidance sharpened the framing of time-critical decision making and the requirement that clinicians be able to independently review the basis of a CDS recommendation. CADENCE’s evidence-graded explanation pillar and embedded governance trace satisfy this directly — the trace contains the basis for review, and the explanation tier is selected to support, not replace, independent clinical review.

The ONC HTI-1 final rule on predictive Decision Support Interventions requires transparency and risk management for predictive DSIs. CADENCE’s governance trace logs the model invoked, its uncertainty, and the dataset characteristics under which it was validated. The closed-loop surveillance pillar provides the postmarket performance monitoring HTI-1 anticipates.

The FDA 2025 AI/ML draft guidance for medical devices — risk-based credibility framework, lifecycle management, predetermined change control plans, postmarket monitoring — fits naturally into the governance trace and surveillance pillars. Drift detection and labeled real-world case capture become artifacts of the closed-loop pillar rather than separate engineering work.

Most clinical AI teams I have advised treat governance as something the legal or compliance group handles after the model works. That sequence is exactly backwards. By the time a model is in production, the architectural decisions that determine whether it can be governed have already been made — and unmaking them is expensive.

This is the same lesson I keep arriving at across the regulated industries I work in. Governance is not a layer above the system. It is the substrate the system grows from. PRAXIGOV™ is the design-time expression of that conviction. CADENCE is the run-time expression. The two are not optional add-ons to clinical AI. In a properly built stack, they are the parts that make it clinical AI rather than a science project.

What it takes to build this

CADENCE is not a product. It is an architecture, and a fairly demanding one. A serious implementation requires five components — none of them speculative, all of them buildable on the standards a modern health system has already invested in.

- A workflow-phase inference service: typically a lightweight stream processor over EHR events, location signals, and time-of-day, producing a continuously updated phase estimate.

- A pre-decisional signal detector: typically NLP on the active note buffer plus EHR navigation telemetry, producing an estimate of which decisions are forming.

- A bandwidth estimator: combines recent interruption load, current census complexity, and time-of-day into a clinician-state estimate that drives engagement-mode selection.

- A decision-coupling layer: pairs every recommendation with a SMART on FHIR action or pre-staged orderable, and lets every rejection be recorded as a dismissal-with-rationale.

- A trace store: append-only, queryable, with a schema that serves both surveillance and audit.
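Of the five components, the trace store is the easiest to sketch. The example below uses SQLite from the Python standard library and enforces the append-only property with triggers that abort any UPDATE or DELETE. The table layout and the `TraceStore` class are assumptions for illustration; a production store would sit on the health system's own audited storage and would need access control, retention, and tamper-evidence beyond what is shown here.

```python
import json
import sqlite3

SCHEMA = """
CREATE TABLE trace (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    recommendation_id TEXT NOT NULL,
    recorded_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,
    payload TEXT NOT NULL
);
CREATE TRIGGER trace_no_update BEFORE UPDATE ON trace
BEGIN
    SELECT RAISE(ABORT, 'trace store is append-only');
END;
CREATE TRIGGER trace_no_delete BEFORE DELETE ON trace
BEGIN
    SELECT RAISE(ABORT, 'trace store is append-only');
END;
"""


class TraceStore:
    def __init__(self, path: str = ":memory:") -> None:
        self._db = sqlite3.connect(path)
        self._db.executescript(SCHEMA)

    def append(self, recommendation_id: str, payload: dict) -> int:
        """Write one trace event and return its row id."""
        cursor = self._db.execute(
            "INSERT INTO trace (recommendation_id, payload) VALUES (?, ?)",
            (recommendation_id, json.dumps(payload, sort_keys=True)),
        )
        self._db.commit()
        return cursor.lastrowid

    def history(self, recommendation_id: str) -> list[dict]:
        """Return every event for one recommendation, oldest first."""
        rows = self._db.execute(
            "SELECT payload FROM trace WHERE recommendation_id = ? ORDER BY id",
            (recommendation_id,),
        )
        return [json.loads(row[0]) for row in rows]


store = TraceStore()
store.append("rec-001", {"event": "presented", "bandwidth_mode": "whisper"})
store.append("rec-001", {"event": "acted-on", "clinician_response": "accepted"})
print(store.history("rec-001"))
```

Storing each lifecycle event as its own row means the same table answers both questions the architecture asks of it: what happened to this recommendation, and what the system knew at each step.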

None of this requires fundamentally new infrastructure. CDS Hooks and SMART on FHIR provide the integration plumbing. FHIR R4/R4B provides the data substrate. The components are within reach of any health system that has invested seriously in its EHR integration platform.

The hard part is organizational, not technical: it is the discipline not to ship AI features that violate any of the seven pillars. That requires a governance backbone of the kind PRAXIGOV is designed to enforce upstream of any of this work.

Closing thought

The dominant failure mode of clinical AI is mistimed information. Models with publishable accuracy are routed through architectures designed for at-decisional alerting, and consequently produce noise the clinical workforce has correctly learned to ignore. The remedy is not better models. The remedy is a delivery architecture that targets the pre-decisional window, couples every output to action, calibrates to clinician bandwidth, learns from outcomes, and remains regulatorily defensible.

CADENCE is one such architecture. It is not the last word — frameworks rarely are — but it specifies an integration discipline that is plainly absent from how AI is being deployed into clinical workflows today. Adopting it, even partially, would change the conversation from “the model works in retrospect but nobody uses it” to “the model is changing care because it is reaching the right clinician at the right moment with the right action.”

That is the only conversation worth having.