The industry is moving fast on capability. It is moving slowly — dangerously slowly — on the one thing that determines whether that capability can be trusted.

There is a pattern repeating across every industry deploying agentic AI right now. An organization builds a multi-agent workflow. It works. Tasks get completed. Decisions get made. Reports get generated. Leaders declare a win.

Then someone asks: Can you show me why the system made that decision? Or: What data was the agent looking at when it produced that output? Or — the one that ends careers in regulated industries — Who is accountable for this?

The room goes quiet.

That silence is not a technology problem. It is a governance problem. And it is, right now, the most consequential unresolved problem in enterprise AI.

What Agentic AI Actually Changes

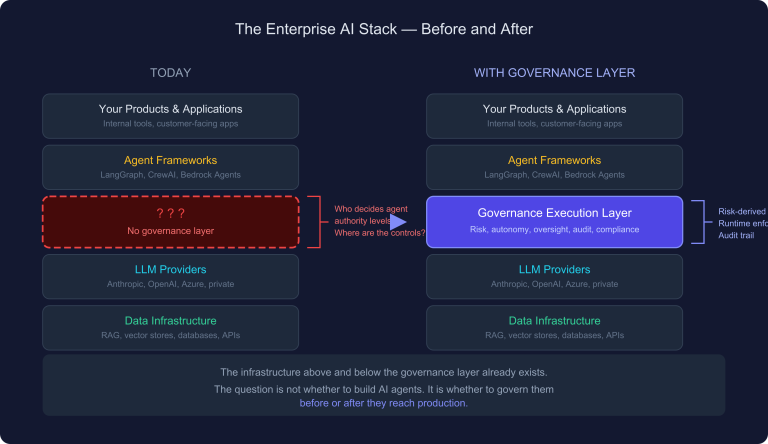

To understand why governance is non-negotiable for agentic AI, you first have to understand what makes agentic AI structurally different from every prior generation of automation.

Traditional automation executes a defined sequence. A robotic process automation bot does exactly what it was programmed to do, in exactly the order it was programmed to do it. The logic is fixed. The deviation surface is small. Governance — in the form of change control and audit logs — is manageable because the system’s behavior is deterministic and bounded.

Agentic AI is none of those things.

An agentic system reasons about a goal, decomposes it into tasks, selects tools, sequences actions, evaluates intermediate results, and adjusts its approach — without a human directing each step. Multiple agents can operate in parallel, handing off to each other, each operating within its own context window, each making decisions the next agent in the chain will act on.

This introduces four properties that fundamentally change the governance challenge:

Emergent decision paths. The sequence of actions is not predetermined. It emerges from the agent’s reasoning at runtime. Two runs on the same input may produce different paths to the same output — or different outputs entirely. You cannot govern a system by auditing its code when the behavior is not in the code.

Compounding errors. In a multi-agent chain, a flawed decision by Agent A does not stay with Agent A. It becomes the input for Agent B, which builds on it, and passes the compounded error to Agent C. By the time the error surfaces in the output, it may be several reasoning steps removed from its origin. Tracing it back is not straightforward. Preventing it requires governance at every handoff, not just at the output.

Autonomous tool use. Agentic systems write to databases, call APIs, trigger workflows, send communications, and execute transactions. This is not a future risk. It is the current state of production deployments. When an agent with write access to a system makes a decision based on flawed reasoning, the consequence is not a wrong answer in a chatbox. It is a wrong record in a database that downstream systems depend on.

Human-in-the-loop degradation. Most organizations deploy agentic AI with an intention toward human oversight. What happens in practice is that humans approve agent outputs at the rate the system generates them — which is far faster than any human can review substantively. The human in the loop becomes a rubber stamp. The governance control that was designed to catch errors is present on paper and absent in substance.

These four properties do not make agentic AI dangerous by default. They make ungoverned agentic AI dangerous by design.

The Governance Gap Is Not What Most People Think It Is

When organizations recognize the governance challenge, they typically respond in one of three ways. All three are insufficient.

The policy response. Write a responsible AI policy. Define principles — fairness, transparency, accountability, explainability. Publish it. Train employees on it. This is necessary. It is nowhere near sufficient. A principle that says “AI decisions should be explainable” provides no mechanism for ensuring that explanation is captured, structured, retrievable, and defensible when the regulator or the board asks for it.

The monitoring response. Add a monitoring layer. Track model drift. Alert on anomalous outputs. Log API calls. This is also necessary and also insufficient. Monitoring tells you that something went wrong after it went wrong. It does not prevent consequential decisions from being made on flawed reasoning. And in regulated environments — pharmacovigilance, financial reporting, healthcare, insurance — after the fact is often too late.

The human-in-the-loop response. Require human approval before consequential actions. This is the right instinct with a fatal implementation flaw. Human-in-the-loop as a governance mechanism only works if the human’s review is substantive, structured, documented, and calibrated to the actual risk level of what is being reviewed. A human clicking approve in under 60 seconds on a flagged AI output is not a control. It is liability redistribution.

None of these responses address the actual governance gap. The actual gap is this: most organizations deploying agentic AI have no formal specification of what the system is governed to do, under what constraints, with what human decision standards, and how every consequential decision will be reconstructed after the fact.

That is not a gap in monitoring. It is a gap in governance architecture.

What Governance Actually Requires

Governance for agentic AI is not a layer on top of the workflow. It is the condition under which the workflow is permitted to operate. That distinction is precise and consequential.

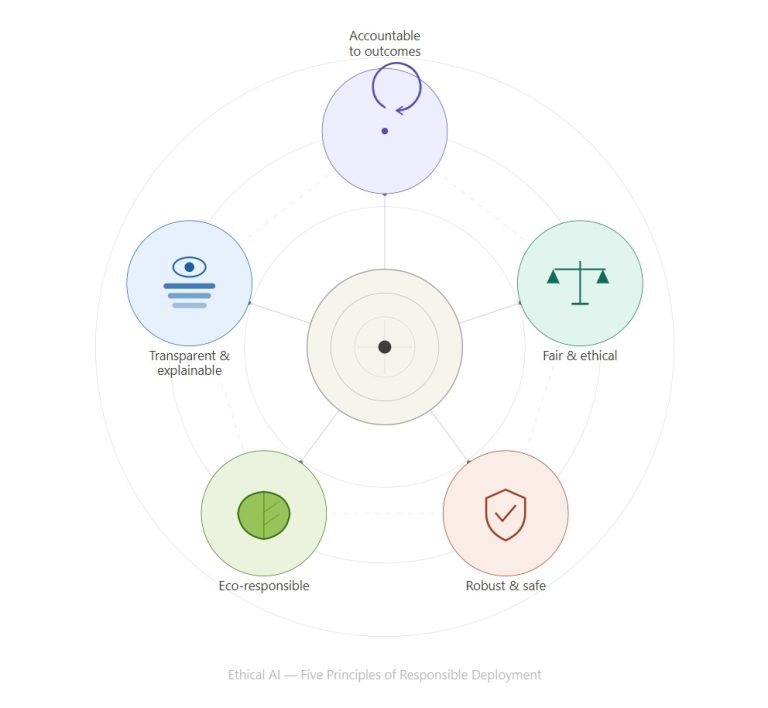

There are five properties a governed agentic system must demonstrate. Not aspirationally. Operationally.

1. Every consequential decision is traceable to data.

Not to a model. Not to a prompt. To the specific data the agent was operating on, at the specific moment it made the decision, under the specific configuration that was active.

This requires that every state transition in the agent workflow produces an immutable record: who or what acted, what data they were looking at, what configuration governed their behavior, what decision was made, and what the prior state was. Not as a log file that captures timestamps. As a structured audit record that can reconstruct the full decision context on demand.

The test is simple: pick any consequential output your agentic system produced six months ago. Without asking anyone who was involved, can you reconstruct — from your audit infrastructure — exactly why the system produced that output, what data drove it, and what human reviewed it? If the answer is no, you do not have a traceable system. You have a system that generates outputs and hopes nothing goes wrong.
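One way to make the difference between a log file and a structured audit record concrete is a hash-chained, append-only record. What follows is a minimal sketch, not a reference implementation: the field names, the `AuditLog` class, and the sample identifiers are all hypothetical. The hash chain only makes tampering detectable; actual immutability would also need write-once storage and an externally anchored head hash, which this sketch assumes but does not provide.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List

GENESIS_HASH = "0" * 64  # stand-in "previous hash" for the first record


@dataclass(frozen=True)
class AuditRecord:
    actor: str           # who or what acted (agent id or human id)
    data_ref: str        # pointer to the exact data snapshot that was used
    config_version: str  # configuration active when the decision was made
    decision: str        # what was decided
    prior_state: str     # workflow state before the transition
    new_state: str       # workflow state after the transition
    prev_hash: str       # digest of the previous record, forming the chain

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self._records: List[AuditRecord] = []

    def append(self, actor: str, data_ref: str, config_version: str,
               decision: str, prior_state: str, new_state: str) -> AuditRecord:
        prev_hash = self._records[-1].digest() if self._records else GENESIS_HASH
        record = AuditRecord(actor, data_ref, config_version, decision,
                             prior_state, new_state, prev_hash)
        self._records.append(record)
        return record

    def verify(self) -> bool:
        """Return True if every record still points at its predecessor's digest."""
        expected = GENESIS_HASH
        for record in self._records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return True


log = AuditLog()
log.append("agent:triage", "snapshot:case-1042@rev3", "cfg-v12",
           "route_to_review", "received", "pending_review")
log.append("human:reviewer-7", "snapshot:case-1042@rev3", "cfg-v12",
           "approve", "pending_review", "approved")
```

Each record carries the actor, the data reference, the active configuration, the decision, and the prior state, so the six-month reconstruction test becomes a query against the log rather than a conversation with whoever was involved.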

2. Agent authority is bounded, not assumed.

Every agent in the system has a defined permission boundary: what it is authorized to read, what it is authorized to write, what it is authorized to trigger, and what requires human authorization before it can proceed.

The distinction between capability and authorization is the one most organizations collapse. An agent that is technically capable of executing a transaction is not automatically authorized to execute it. Authorization requires a defined condition — a human sign-off, a gate condition met, a threshold not exceeded — and a record that the condition was satisfied.

Permission boundaries are not set by the engineering team based on what seems reasonable. They are set by the governance specification, owned by the process owner, version-controlled, and change-managed. When a permission boundary changes — because a process changes, a risk profile changes, or a regulation changes — the change goes through the same governance process as any other material process change.
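The separation of capability from authorization can be sketched as a versioned boundary object plus a check that runs before every action. This is an illustration under stated assumptions: `PermissionBoundary`, `authorize`, the resource names, and the `approval_id` parameter are all hypothetical, and a real deployment would load the boundary from the change-managed governance specification rather than define it inline.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


class AuthorizationError(Exception):
    """Raised when an agent attempts an action outside its boundary."""


@dataclass(frozen=True)
class PermissionBoundary:
    version: str                               # version of the governance spec
    read: FrozenSet[str] = frozenset()
    write: FrozenSet[str] = frozenset()
    trigger: FrozenSet[str] = frozenset()
    requires_human: FrozenSet[str] = frozenset()  # resources needing sign-off


def authorize(boundary: PermissionBoundary, action: str, resource: str,
              approval_id: Optional[str] = None) -> dict:
    """Check an action against the boundary and return a record of the check."""
    allowed = {
        "read": boundary.read,
        "write": boundary.write,
        "trigger": boundary.trigger,
    }.get(action, frozenset())
    if resource not in allowed:
        raise AuthorizationError(
            f"{action} on {resource} is outside boundary {boundary.version}")
    # Writes and triggers on sensitive resources need a recorded human sign-off.
    if action != "read" and resource in boundary.requires_human and approval_id is None:
        raise AuthorizationError(
            f"{action} on {resource} requires human authorization")
    return {
        "boundary_version": boundary.version,
        "action": action,
        "resource": resource,
        "approval_id": approval_id,
    }


boundary = PermissionBoundary(
    version="pb-v3",
    read=frozenset({"claims_db", "ledger"}),
    write=frozenset({"claims_db"}),
    trigger=frozenset({"payment_workflow"}),
    requires_human=frozenset({"payment_workflow"}),
)
```

The returned dictionary is the record that the condition was satisfied. It names the boundary version that was in force, so a later change to the boundary does not rewrite the history of earlier decisions.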

3. Handoffs between agents are governed, not assumed.

In a multi-agent system, the most dangerous moments are the handoffs. This is where context is lost, where errors compound, where a flawed intermediate output becomes the input for the next stage of reasoning.

Every handoff requires a gate: a formal test that must pass before the downstream agent proceeds. The gate tests the integrity of what is being passed — completeness, validity, conformance to the expected schema — and the conditions under which the downstream agent is permitted to operate on it.

Gates are not validation checks in the engineering sense. They are governance controls in the process sense. The difference: a validation check tests whether data is well-formed. A governance gate tests whether the conditions for a legitimate decision have been met. Those are not the same thing.
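The distinction between a validation check and a governance gate can be shown side by side. In this sketch the payload schema, the `Handoff` type, and the specific gate conditions (approved configuration version, authorized source agent, evidence attached) are assumptions chosen for illustration; the actual conditions would come from the governance specification for the process in question.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

REQUIRED_FIELDS = {"case_id", "finding", "evidence_refs"}  # hypothetical schema


@dataclass(frozen=True)
class Handoff:
    source_agent: str
    config_version: str
    payload: dict


def is_well_formed(handoff: Handoff) -> bool:
    """Validation check: is the data shaped as expected?"""
    return REQUIRED_FIELDS.issubset(handoff.payload)


def governance_gate(handoff: Handoff, approved_versions: Set[str],
                    authorized_sources: Set[str]) -> Tuple[bool, List[str]]:
    """Governance gate: were the conditions for a legitimate decision met?"""
    reasons: List[str] = []
    if not is_well_formed(handoff):
        reasons.append("payload is missing required fields")
    if handoff.config_version not in approved_versions:
        reasons.append(f"config {handoff.config_version} is not an approved version")
    if handoff.source_agent not in authorized_sources:
        reasons.append(f"{handoff.source_agent} is not authorized to hand off here")
    if not handoff.payload.get("evidence_refs"):
        reasons.append("finding has no supporting evidence attached")
    return (not reasons, reasons)


good = Handoff("agent:detector", "cfg-v12",
               {"case_id": "C-1", "finding": "possible signal",
                "evidence_refs": ["report-88"]})
stale = Handoff("agent:detector", "cfg-v9",
                {"case_id": "C-2", "finding": "possible signal",
                 "evidence_refs": ["report-91"]})

approved = {"cfg-v12"}
sources = {"agent:detector"}
good_result = governance_gate(good, approved, sources)
stale_result = governance_gate(stale, approved, sources)
```

The `stale` handoff is perfectly well-formed and would pass any schema validator. It fails the gate because it was produced under a configuration that is no longer approved, which is exactly the kind of condition a validation check never sees.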

4. Human decisions are structured, not free-form.

The most underbuilt control in enterprise agentic AI is the human interaction layer. Organizations focus enormous effort on agent design, prompt engineering, and output formatting. They give almost no thought to how the human reviewer’s decision is captured.

A human who reviews an agent output and types “looks good” in a comment field has not made a governed decision. They have expressed an opinion. The governance record for that review — what they looked at, what they considered, what they found, what they decided, and why — is absent.

Governed human interaction means that the review interface is structured: the reviewer must engage with specific elements of the output, confirm specific conditions, document specific reasoning, and provide a decision that is machine-readable and auditable. The system measures whether the review was substantive — by time, by completeness of engagement, by coverage of required elements.

This is not about distrust of reviewers. It is about building a record that can withstand scrutiny. In any high-stakes environment, a decision is only as defensible as its documentation.
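A structured review can be represented as a typed decision object plus a check for whether the review was substantive. The sketch below is an assumption-laden illustration: the `ReviewDecision` fields, the check names, and the thresholds for minimum time and rationale length are hypothetical placeholders for values a governance specification would set per output type.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

VALID_DECISIONS = {"approve", "reject", "escalate"}


@dataclass(frozen=True)
class ReviewDecision:
    reviewer_id: str
    output_id: str
    decision: str                     # machine-readable, one of VALID_DECISIONS
    confirmed_checks: FrozenSet[str]  # conditions the reviewer explicitly confirmed
    rationale: str                    # documented reasoning
    seconds_spent: float              # time the reviewer spent on this output


def review_deficiencies(review: ReviewDecision, required_checks: FrozenSet[str],
                        min_seconds: float, min_rationale_chars: int = 40) -> List[str]:
    """Return the reasons a review is not substantive; an empty list means it passes."""
    problems: List[str] = []
    if review.decision not in VALID_DECISIONS:
        problems.append(f"decision '{review.decision}' is not machine-readable")
    missing = required_checks - review.confirmed_checks
    if missing:
        problems.append("unconfirmed checks: " + ", ".join(sorted(missing)))
    if review.seconds_spent < min_seconds:
        problems.append(f"review took {review.seconds_spent:.0f}s, minimum is {min_seconds:.0f}s")
    if len(review.rationale.strip()) < min_rationale_chars:
        problems.append("rationale is too short to document the reasoning")
    return problems


required = frozenset({"source_data_checked", "threshold_applied", "exceptions_noted"})

rubber_stamp = ReviewDecision("reviewer-7", "out-301", "approve",
                              frozenset(), "looks good", 12.0)
thorough = ReviewDecision(
    "reviewer-7", "out-302", "approve", required,
    "Source data matches the cited snapshot; threshold logic applied correctly.",
    410.0)

stamp_problems = review_deficiencies(rubber_stamp, required, min_seconds=180)
thorough_problems = review_deficiencies(thorough, required, min_seconds=180)
```

Here the "looks good" review is flagged on three counts (unconfirmed checks, time, and rationale) even though its decision value is valid, while the second review produces an empty deficiency list. Time and length are crude proxies; they show that substantiveness can be measured, not that these particular measures are sufficient.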

5. Human review capacity is a governing constraint, not a workload metric.

This is the governance failure that is most invisible until it is catastrophic.

Agentic AI generates outputs at machine speed. Humans review at human speed. The gap is not a scheduling problem. It is a governance problem. When the volume of outputs requiring human review exceeds the capacity for substantive review, one of two things happens: either the review becomes cursory — the rubber stamp problem — or items accumulate without review and decisions are effectively made without oversight.

Neither outcome is acceptable in a governed system. The governed response is to treat reviewer capacity as a hard constraint on system output. The system does not generate more outputs requiring human review than can be reviewed substantively within the required time frame. When capacity is constrained, the system holds — with a documented hold reason — rather than proceeding with inadequate review.

This requires knowing, explicitly, what substantive review of each output type requires in terms of time and expertise. That is a governance specification, not an estimate. It has an owner. It is reviewed periodically. And it is enforced.
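Treating reviewer capacity as a hard constraint can be sketched as a release planner that admits outputs only while review time remains and holds the rest with a documented reason. Everything specific here is hypothetical: the `plan_release` function, the output types, and the minutes per review are illustrative stand-ins for an owned and periodically reviewed specification. The sketch also makes one simplifying choice, letting smaller items fill leftover capacity after a larger one is held, where a real system might require strict queue order.

```python
from typing import Dict, List, Tuple

# Hypothetical specification: minutes of substantive review per output type.
REVIEW_MINUTES: Dict[str, float] = {
    "signal_assessment": 30,
    "journal_entry": 10,
}


def plan_release(queue: List[Tuple[str, str]], capacity_minutes: float,
                 spec: Dict[str, float]) -> Tuple[List[str], List[Tuple[str, str]]]:
    """Split queued (output_id, output_type) pairs into released and held.

    An output is released only if its full review time fits in the remaining
    capacity; otherwise it is held together with a documented hold reason.
    """
    released: List[str] = []
    held: List[Tuple[str, str]] = []
    remaining = capacity_minutes
    for output_id, output_type in queue:
        cost = spec[output_type]
        if cost <= remaining:
            released.append(output_id)
            remaining -= cost
        else:
            reason = (f"held: needs {cost} min of review, "
                      f"{remaining} min of capacity left in window")
            held.append((output_id, reason))
    return released, held


queue = [
    ("out-1", "signal_assessment"),
    ("out-2", "signal_assessment"),
    ("out-3", "journal_entry"),
]
released, held = plan_release(queue, capacity_minutes=45, spec=REVIEW_MINUTES)
```

With 45 minutes of capacity, the first signal assessment and the journal entry are released and the second signal assessment is held. The hold is itself a recorded governance event, not a silent backlog.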

The Regulated Industry Imperative

Everything above applies to any organization deploying agentic AI for consequential purposes. In regulated industries, it is not optional. It is the condition of operating.

Consider pharmacovigilance. An agentic signal detection system processes adverse event data, identifies potential drug safety signals, and routes them for human assessment. If the system cannot demonstrate — to a regulatory inspector — that every signal was assessed under a documented, version-controlled methodology, that every human assessment was structured and timed, that every regulatory obligation was triggered automatically at the correct threshold, and that the full decision history is reconstructable on demand — the system is not compliant. It does not matter how sophisticated the detection algorithms are.

Consider financial reporting. An agentic system processes manual journal entries, applies fraud detection logic, routes for approval, and feeds into period-end close. If the system cannot demonstrate that segregation of duties was enforced on every entry, that every approval was substantive, that every exception was documented with compensating controls, and that the full journal entry lifecycle is auditable without human narration, then the system cannot support a Sarbanes-Oxley (SOX) attestation. The capability is irrelevant if the governance is absent.

This pattern holds in insurance claims, healthcare authorization, legal contract review, and every other domain where decisions have material consequences and accountability is not optional.

The organizations that will win in these domains are not the ones with the most capable AI. They are the ones with the most governed AI — because governed AI is the only AI that can be deployed at scale in environments where trust is the baseline requirement.

The Governance Deficit Is a Strategic Risk

There is a tendency to treat AI governance as a compliance cost — something you do to satisfy auditors and regulators, not something that creates value. That framing is wrong in two directions.

First, the downside risk is not the cost of compliance. It is the cost of a system failing in a high-stakes environment because governance was treated as overhead. A drug safety signal missed because reviewer capacity was overwhelmed and no throttle existed. A fraudulent journal entry posted because the approval was a rubber stamp. A healthcare authorization denied, or granted, based on flawed agent reasoning that no one can reconstruct or defend.

These are not hypothetical risks. They are the predictable consequences of deploying capable but ungoverned agentic systems at scale in consequential environments.

Second, the upside of genuine governance is not just risk reduction. It is the ability to deploy agentic AI in contexts where it otherwise cannot go. Organizations with mature governance infrastructure can move faster, not slower — because they have the audit trail that satisfies the regulator, the structured human review that satisfies the board, and the traceable decision record that satisfies the inspector. Governance is the access pass to the highest-value, highest-trust applications.

The organizations treating governance as a constraint are protecting yesterday’s deployment profile. The organizations treating governance as infrastructure are building the capability to operate tomorrow’s.

What the Field Needs to Stop Doing

Stop calling a system prompt a governance framework. Instructions to an agent about how to behave are not governance. They are aspirations encoded as text. They can be circumvented by sufficiently complex inputs, by model drift, by context window limitations, and by the compounding effects of multi-agent reasoning chains.

Stop treating human-in-the-loop as a binary. The question is not whether a human is in the loop. The question is whether the human’s engagement is substantive, structured, documented, and calibrated to the risk. A human in the loop who reviews 200 outputs in two hours has spent an average of 36 seconds on each one. That is not a governance control.

Stop separating AI capability development from governance development. The organizations that build the agent first and bolt on governance afterward will spend years retrofitting controls that should have been foundational. Governance is not a phase that follows deployment. It is a precondition for deployment in any environment where the decisions matter.

Stop assuming that because the output is correct, the process was governed. A system can produce correct outputs through an ungoverned process. The problem surfaces when it produces an incorrect output — and you cannot explain why, cannot reconstruct the decision, and cannot demonstrate that any human reviewed it substantively. Correct outcomes do not validate governance. Governance is the structure that makes outcomes defensible regardless of whether they are correct.

The Ask

The organizations deploying agentic AI in 2025 and 2026 are making choices — mostly by default, rarely by design — about what governance infrastructure they will build. Those choices will determine whether agentic AI in their environments is a sustainable competitive advantage or a future liability.

The technology is moving faster than the governance thinking. That gap needs to close. Not in the next generation of models. Now. In the systems being deployed today, in the workflows being automated this quarter, in the decisions being handed to agents this week.

Governance is not what you do when something goes wrong. It is the reason you can prove something went right.