OpenOvation · Thought Leadership in AI & Innovation

the Algorithm.

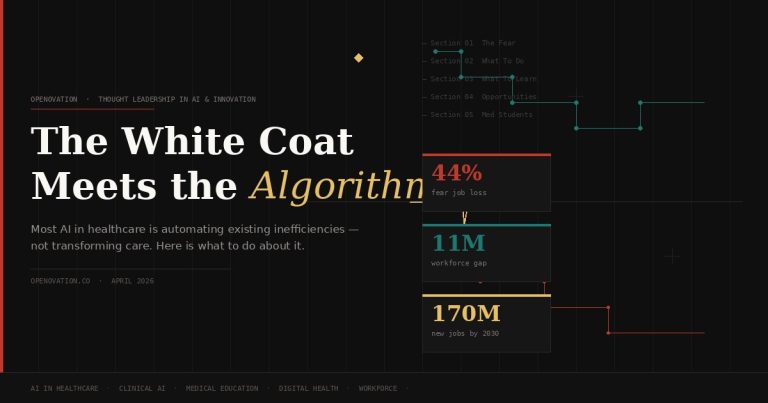

The anxiety is real and it is data-backed. Forty-four percent of healthcare workers fear AI will take their jobs — higher than the 35% cross-sector average. But fear tends to target the wrong thing. The greater risk is not replacement. It is that clinicians who do not understand AI well enough to evaluate it, challenge it, or shape it will progressively cede authority over decisions that directly affect their practice. Not their job titles. Their professional agency.

This article is structured as a practitioner’s guide, not a motivational overview. Five sections, each with a specific agenda: why the concern is legitimate, what to do about it now, what to learn and in what sequence, where the real opportunities are, and what students entering medicine today need to prioritize that the current curriculum does not yet require.

Acknowledge what is already happening. Medical transcription is 99% automated. Forty percent of medical coding is projected to be automated in 2025. Radiology technicians performing routine scans face documented displacement risk by 2030. These shifts do not rest on theoretical capability alone; they reflect tools already deployed in health systems operating today.

The Bureau of Labor Statistics’ 2025 AI impact analysis is clarifying on one point: no direct patient care roles made its list of AI-displaced occupations. The displacement risk is concentrated in healthcare-adjacent functions: coding, billing, administrative coordination, and roles built primarily on data retrieval and documentation. That is important context. It does not mean clinical roles are immune. It means the timeline and mechanism of impact are different.

For clinical roles, the more precise concern is what researchers call automation bias — the documented tendency for practitioners to defer to algorithmic outputs rather than interrogate them. FDA-cleared AI tools are already embedded in imaging workflows, clinical decision support systems, and EHR-integrated early warning alerts. When a clinician consistently accepts these outputs without critical evaluation, two things happen: patient risk accumulates silently, and the clinical judgment that took years to build begins to atrophy from disuse.

That concern reflects a real dynamic — not because AI will devalue clinical expertise, but because healthcare systems will increasingly use AI outputs to justify staffing and scope decisions. The clinician who cannot articulate why an AI recommendation is wrong, or when a model’s training population does not resemble their patient panel, is operating at a structural disadvantage in those conversations.

The core risk is not job loss — it is loss of clinical authority. As AI-generated recommendations become standard in clinical workflows, the practitioner who cannot evaluate them critically will find their professional judgment progressively overridden by a system they do not understand.

The SHRM 2025 Automation Survey adds necessary precision: 63.3% of all jobs contain non-technical barriers that prevent complete automation — regulatory requirements, patient preferences for human contact, ethical accountability structures, and liability frameworks. Healthcare has more of these barriers than most industries. That is a structural protection. But it is not a reason to be passive. Those barriers will be eroded, negotiated, and worked around over time. The practitioners who understand that dynamic are the ones who will shape how it unfolds — rather than react to decisions already made.

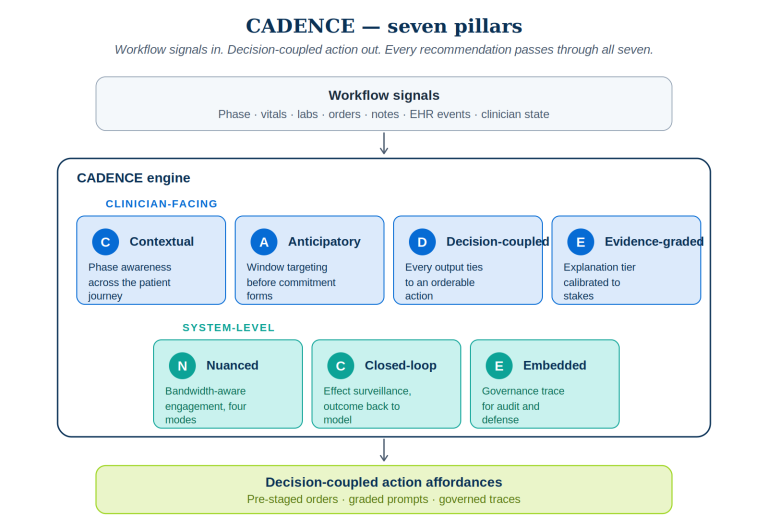

The instinct to wait for institutional AI training programs is a mistake. Most health system AI education initiatives are a year or more behind the deployment curve. By the time your hospital offers a formal module on a tool, that tool will already be making decisions in your workflow. The practitioners who will have influence over how AI is implemented are the ones who built their understanding before the RFP was issued — not after the vendor contract was signed.

The most common mistake healthcare professionals make when approaching AI upskilling is adopting the wrong reference frame. This is not a technology education problem. It is a clinical competency extension. You are not learning to build AI systems. You are learning to deploy, evaluate, and govern them within a professional context where the consequences of errors are measured in patient outcomes.

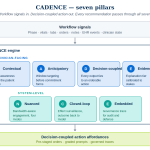

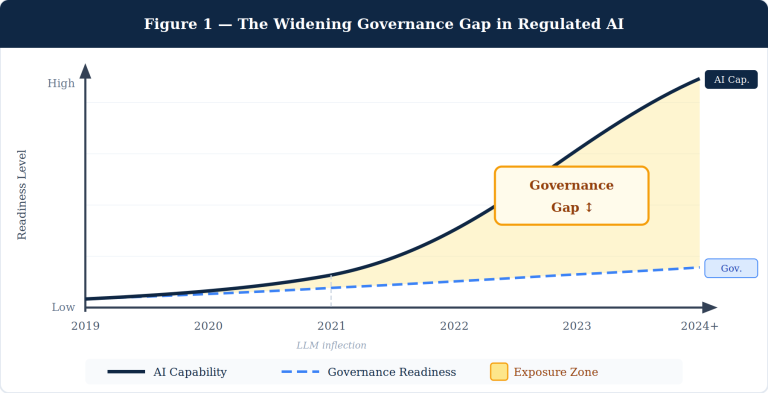

A 2025 systematic review in PMC examining AI skills for healthcare professionals identified three core domains: technical literacy, procedural competence in AI-assisted workflows, and the ethical and governance reasoning to know when AI recommendations should be overridden. The proportion of each domain required depends on role and specialty — but the foundational tier is universal.

| Skill Domain | Tier | What to Learn | Why It Matters |
|---|---|---|---|
| AI Conceptual Literacy | All Clinicians | How ML models are trained, training data composition, confidence scores, hallucination risk in clinical outputs | You cannot evaluate tools you don’t understand. Non-negotiable baseline. |
| Critical Appraisal of AI Evidence | All Clinicians | Reading AI validation studies: AUC, sensitivity/specificity, external validation, subgroup performance | Vendors cite headline numbers. You need the questions that reveal where those numbers break down. |
| Clinical Decision Support Navigation | All Clinicians | AI recommendations as hypotheses to test, not conclusions to accept. Override protocols and documentation. | Automation bias is a patient safety issue already documented in live clinical systems. |
| Algorithmic Bias & Equity Reasoning | All Clinicians | How dataset bias translates to clinical inequity. Identifying when a model’s training population doesn’t match your patients. | Biased AI systematically underperforms for underserved populations, the exact patients where errors are most consequential. |
| Specialty AI Applications | Specialist Track | FDA-cleared AI tools in your specialty. Imaging AI, genomics decision support, medication optimization. | The specialist who knows which tools are validated, for whom, and under what conditions holds authority that others lack. |
| Health Data Governance | Specialist Track | HIPAA in AI contexts, de-identification standards, federated learning, patient consent for AI training data. | Patient trust is built or destroyed by how data is handled. This knowledge grants standing in governance decisions. |
| AI Procurement & Vendor Evaluation | Leadership Track | Vendor assessment frameworks, clinical validation requirements for procurement, implementation readiness. | CMOs and CMIOs who can’t evaluate AI vendor claims are making buy decisions on marketing materials. That’s a patient safety issue dressed as a procurement process. |
| AI Governance & Oversight Architecture | Leadership Track | Human-in-the-loop design, AI audit frameworks, FDA Digital Health Center, EU AI Act, CMS AI programs. | Deploying AI without governance creates accountability gaps that will surface as adverse events. Leaders who understand this prevent them. |
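The "Critical Appraisal of AI Evidence" row can be made concrete with a small worked example. The sketch below is illustrative only: the `ConfusionCounts` container, the function names, and the study counts are all hypothetical, not drawn from any real validation study. It shows one way a headline sensitivity and specificity can break down: the same tool yields a very different positive predictive value when disease prevalence in your patient panel is lower than in the validation cohort.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """2x2 table from a validation study (hypothetical container)."""
    tp: int  # model positive, disease present
    fp: int  # model positive, disease absent
    fn: int  # model negative, disease present
    tn: int  # model negative, disease absent


def sensitivity(c: ConfusionCounts) -> float:
    """Share of diseased patients the model flags."""
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    """Share of disease-free patients the model clears."""
    return c.tn / (c.tn + c.fp)


def ppv_at_prevalence(sens: float, spec: float, prevalence: float) -> float:
    """Positive predictive value via Bayes' rule at a given prevalence."""
    true_pos = sens * prevalence
    false_pos = (1.0 - spec) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


def npv_at_prevalence(sens: float, spec: float, prevalence: float) -> float:
    """Negative predictive value via Bayes' rule at a given prevalence."""
    true_neg = spec * (1.0 - prevalence)
    false_neg = (1.0 - sens) * prevalence
    return true_neg / (true_neg + false_neg)


# Made-up counts for illustration: 1,000 patients, 10% with disease.
study = ConfusionCounts(tp=90, fp=50, fn=10, tn=850)
sens = sensitivity(study)
spec = specificity(study)

# Compare the study's prevalence (10%) with a lower-prevalence panel (1%).
for prevalence in (0.10, 0.01):
    ppv = ppv_at_prevalence(sens, spec, prevalence)
    npv = npv_at_prevalence(sens, spec, prevalence)
    print(f"prevalence {prevalence:.0%}: PPV {ppv:.2f}, NPV {npv:.3f}")
```

With these invented numbers, roughly 64% of positive flags are true positives at 10% prevalence, but only about 14% are at 1% prevalence, even though sensitivity and specificity have not changed. That is the kind of question a headline accuracy figure does not answer on its own.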

One competency deserves emphasis beyond any table: the capacity for AI-independent clinical reasoning. As AI-generated recommendations become pervasive, the practitioner who retains the judgment to step outside them — to recognize when the model is wrong, when the presentation is atypical, when the patient in front of them defies the statistical pattern — becomes both rarer and more valuable. The deep clinical intuition built from years of direct patient contact is not obsolete in an AI environment. It is the primary check on AI error. Preserve it deliberately.

The World Economic Forum projects 170 million new jobs globally by 2030, against 92 million displaced — a net positive of 78 million positions. Healthcare roles with AI augmentation are among the explicit growth drivers: nurse practitioners are projected to grow 52% from 2023 to 2033. The constraint is not that the opportunities do not exist. It is that most healthcare professionals are not positioned to compete for them because they have not built the complementary AI fluency those roles require.

Where to look — specific and actionable:

A 2025 PMC viewpoint on AI in medical education states it directly: most medical students currently lack understanding of the basic technical principles underlying AI, and medical education accreditation standards typically exclude AI competencies. That is a curriculum gap and a first-mover opportunity simultaneously. The student who self-educates on AI during training will enter residency with a competency that most attending physicians do not yet hold. That asymmetry is time-limited. Exploit it now.

The healthcare system you will enter at full clinical capacity — in the early 2030s — will have AI embedded in diagnostic workflows, treatment planning, discharge management, and potentially in surgical assistance. The question is not whether you will work alongside AI. It is whether you will understand it well enough to use it safely, challenge it when it is wrong, and shape how it is deployed in your practice environment.

These are not electives. They are the structural foundations of a clinical career that retains authority and relevance through the AI era — and the ones least likely to be handed to you by a standard medical education pathway.

1. Build real biostatistics and research methodology depth. Every AI tool you will evaluate in clinical practice is validated through a study. The clinician who can critically read that study, who understands AUC, sensitivity, specificity, NPV, subgroup performance gaps, and the implications of a homogeneous training cohort, holds evaluative authority that algorithms cannot replicate. This is not statistical theory. It is clinical self-defense.

2. Get direct research experience in a medical AI lab during training. A summer in a clinical AI research environment at your institution, or an elective with research groups at Stanford HAI, Google Health, Microsoft Research Health AI, or a comparable academic center, is worth more than any certificate course. Being a practitioner who has actually been inside the development and validation process changes how you use and evaluate these tools for the rest of your career.

3. Develop the clinical competencies that AI cannot replicate — with the same rigor you bring to science. Communication under uncertainty. Ethical reasoning when the guidelines run out. The capacity to hold a patient’s values in view when clinical evidence is ambiguous. These are not soft skills — they are the irreplaceable core of clinical practice. Build them deliberately.

4. Choose your specialty with AI exposure clearly in your field of vision. Radiology, pathology, and genomic medicine are experiencing the most concentrated AI development, and will need the most AI-fluent clinicians to evaluate, govern, and safely deploy those tools. Do not avoid AI-exposed specialties out of displacement anxiety. Enter them with the AI literacy to lead rather than be led.

5. Build a professional intellectual presence now, not after residency. Write. Publish a perspective on a clinical AI paper you found important. Contribute to your institution’s AI working group or AMSA’s digital health chapter. The physician entering residency with a documented record of substantive AI engagement — not certificates, but actual thinking — is positioned for opportunities that the standard applicant pool cannot access.
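The appraisal skills named in point 1 (AUC and subgroup performance gaps) can be practiced on paper-sized data. The following is a minimal sketch, not a validated analysis method: the function names, scores, labels, and group tags are all invented for illustration. It computes AUC as the probability that a randomly chosen positive case scores higher than a randomly chosen negative one, then repeats the calculation per subgroup to show how a pooled figure can hide a group in which the model performs no better than chance.

```python
from itertools import product
from typing import Dict, Sequence


def auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUC as P(positive case outscores negative case); ties count half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p, n in product(pos, neg)
    )
    return wins / (len(pos) * len(neg))


def auc_by_subgroup(
    scores: Sequence[float], labels: Sequence[int], groups: Sequence[str]
) -> Dict[str, float]:
    """Compute AUC separately within each subgroup label."""
    result = {}
    for g in sorted(set(groups)):
        idx = [i for i, name in enumerate(groups) if name == g]
        result[g] = auc([scores[i] for i in idx], [labels[i] for i in idx])
    return result


# Invented toy data: model risk scores, true outcomes, and a subgroup tag.
scores = [0.9, 0.8, 0.3, 0.2, 0.6, 0.4, 0.7, 0.3]
labels = [1, 1, 0, 0, 1, 1, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

pooled = auc(scores, labels)
per_group = auc_by_subgroup(scores, labels, groups)
print(f"pooled AUC: {pooled:.3f}")
for name, value in per_group.items():
    print(f"group {name} AUC: {value:.3f}")
```

In this toy data the pooled AUC is 0.875, while group A scores 1.0 and group B scores 0.5. A validation paper that reports only the pooled number would look strong while describing a tool that cannot rank patients at all in group B, which is why asking for subgroup results is worth the effort.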

Clinical Informatics Elective

Medical AI Lab Research

Health Equity & Algorithmic Bias

EHR & Clinical Data Standards

AI Ethics in Medicine

Human Factors in Clinical Systems

FDA Digital Health Regulation

The broader shift in medical education is a move from training physicians to memorize information toward training them to navigate, evaluate, and apply knowledge in context. AI can surface the current treatment protocol faster than any clinician can recall it. What AI cannot do is determine whether that protocol applies to this patient — with this comorbidity profile, in this social context, with these stated preferences and values. That contextual, patient-specific judgment is the core of clinical practice. Students who understand that are preparing for the right job.