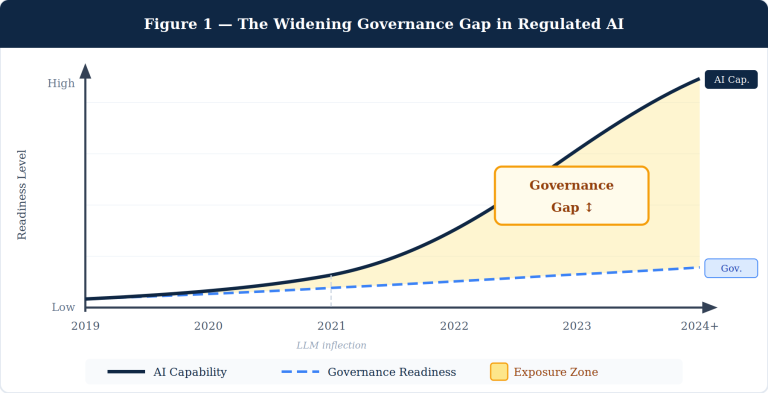

AI is no longer a research experiment. It is embedded in credit decisions, clinical pathways, hiring pipelines, and public services. The ethical question has shifted from whether to govern AI to how rigorously governance is enforced across the entire lifecycle — from design through decommission. Five principles define that standard. None are optional. None can be addressed in isolation.

1. Accountable to Outcomes

Accountability is the load-bearing wall of every other ethical principle. Without it, fairness commitments are aspirational and transparency obligations are performative.

AI governance extends across the entire AI lifecycle — from initial design through deployment and ongoing monitoring — and creates accountability structures that ensure human oversight remains central, even as AI systems handle increasingly complex tasks. That is the operative definition. Governance is not a sign-off at launch. It is a continuous obligation.

The practical implication: every AI solution requires a named human accountable for its outcomes, someone who knows they hold that role, understands the qualifications it requires, has authority to modify or stop the AI, and can transfer accountability in an orderly way. Accountability assigned only to “the team” or “the platform” is the same as no accountability.

Agency in AI systems is dispersed across many players: firms, customers, hardware, software, designers, and developers. Each carries a share of the responsibility, but conventional moral frameworks, which assign responsibility based on individual choices, do not map cleanly onto this distributed landscape. This mismatch is not abstract. It surfaces in real consequences: unless ownership has been explicitly assigned, when a biased model produces a discriminatory output, no one is positioned to own it.

Specific AI policies must cover the entire model lifecycle: data acquisition, preparation, model development, validation, deployment, monitoring, and retirement. Roles must be assigned — AI ethics advisors, data scientists, business owners, legal and compliance officers, data stewards — with each party knowing their accountability obligations clearly.

The operative test: If your AI solution failed catastrophically today, could you identify — within an hour — who is responsible, what authority they have, and what remediation they would execute? If the answer is no, accountability is theater.
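The one-hour test can be made checkable. The sketch below is a minimal illustration, not a prescribed schema: the `AccountabilityRecord` fields, the `COLLECTIVE_OWNERS` placeholder values, and the system name are all assumptions chosen to mirror the requirements described above (a named owner who has acknowledged the role, holds authority to stop the system, has a remediation plan, and can hand over in an orderly way).

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

# Hypothetical placeholder values that signal dispersed accountability.
COLLECTIVE_OWNERS = {"", "tbd", "the team", "the platform"}


@dataclass
class AccountabilityRecord:
    """One AI solution and the single human who answers for its outcomes."""
    solution: str
    owner: Optional[str]                 # a named person, not a group
    acknowledged_on: Optional[date]      # date the owner accepted the role
    can_modify_or_stop: bool             # authority over the running system
    remediation_runbook: Optional[str]   # where the documented plan lives
    successor: Optional[str]             # enables an orderly transfer


def accountability_gaps(record: AccountabilityRecord) -> List[str]:
    """Return every reason this record would fail the one-hour test."""
    gaps = []
    owner = (record.owner or "").strip().lower()
    if owner in COLLECTIVE_OWNERS:
        gaps.append("no named individual owner")
    if record.acknowledged_on is None:
        gaps.append("owner has not acknowledged the role")
    if not record.can_modify_or_stop:
        gaps.append("owner lacks authority to modify or stop the system")
    if not record.remediation_runbook:
        gaps.append("no documented remediation plan")
    if not record.successor:
        gaps.append("no successor named for an orderly transfer")
    return gaps


# Illustrative record; the system name is invented for this example.
record = AccountabilityRecord(
    solution="credit-scoring-v3",
    owner="the team",
    acknowledged_on=None,
    can_modify_or_stop=False,
    remediation_runbook=None,
    successor=None,
)
for gap in accountability_gaps(record):
    print(f"{record.solution}: {gap}")
```

An empty list is the only passing result. Anything else is a specific, assignable gap rather than a general sense that governance is weak.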

2. Fair & Ethical

Fairness in AI is not a static condition. It is an active practice that must be embedded at every stage of the model lifecycle — in data sourcing, feature selection, training, validation, and deployment monitoring.

The UNESCO Recommendation on the Ethics of Artificial Intelligence, endorsed by all 194 member states, centers on human rights and fundamental freedoms — including safety, fairness, nondiscrimination, privacy, and sustainability — and calls for comprehensive AI impact assessments to identify risks and benefits with ongoing monitoring.

The risks of neglect are systemic. If not carefully governed, AI can perpetuate and even amplify existing societal biases, leading to further inequality and discrimination. As AI systems become more autonomous, complex ethical questions arise about accountability for their actions, especially in critical domains like autonomous vehicles or healthcare.

The EU AI Act — the most enforceable governance framework currently in force — regulates AI systems based on risk tiers: unacceptable, high, limited, and minimal. It bans certain uses outright (social scoring, for example) and imposes strict controls on high-risk applications including healthcare and financial services. Organizations operating in regulated industries are not operating in a voluntary compliance environment. Enforcement mechanisms are real.

A widely held view in AI ethics positions GenAI as a tool that should assist rather than replace human judgment, with accountability firmly placed on institutions rather than automated systems. Concerns remain that generative AI reinforces biases, exacerbates epistemic injustice, and centralizes power.

Autonomy deserves separate emphasis. The right of individuals to understand how AI conclusions about them are reached, and to contest those conclusions, is not a compliance checkbox. It is a precondition for public trust in AI-enabled systems. Fairness without autonomy is paternalism by algorithm.

The operative test: Can your organization demonstrate, with documented evidence, that your AI systems have been tested for disparate impact across protected classes, and that bias mitigation was applied before deployment — not after a complaint?
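One common way to produce that documented evidence is a disparate impact ratio: each group's rate of favorable outcomes divided by a reference group's rate. The sketch below is a minimal illustration under stated assumptions. The function names, the sample data, and the 0.8 cutoff are not from any framework cited here; 0.8 is the "four-fifths" heuristic often used as a screening signal in U.S. employment-selection practice, and a ratio below it is a prompt for investigation, not a legal finding.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, favorable) pairs, favorable is a bool."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        favorable[group] += int(outcome)
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratios(decisions, reference_group):
    """Each group's selection rate relative to the reference group's rate."""
    rates = selection_rates(decisions)
    reference_rate = rates[reference_group]
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return {group: rate / reference_rate for group, rate in rates.items()}


def flagged_groups(decisions, reference_group, threshold=0.8):
    """Groups whose ratio falls below the screening threshold (assumed 0.8)."""
    ratios = disparate_impact_ratios(decisions, reference_group)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Made-up validation outcomes: group A approved 50 of 100, group B 30 of 100.
decisions = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)
print(disparate_impact_ratios(decisions, reference_group="A"))
print(flagged_groups(decisions, reference_group="A"))
```

In this invented sample, group B's ratio is about 0.6, so it is flagged. Running the check on validation data before deployment, and keeping the output, is what turns a fairness commitment into evidence.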

3. Robust & Safe

Safety in AI has two dimensions that are frequently conflated: technical robustness (the system performs reliably under real-world conditions) and non-maleficence (the system does not harm the people it serves). Both are necessary. Neither is sufficient alone.

The NIST AI Risk Management Framework describes seven characteristics of trustworthy AI: validity and reliability, safety, security and resilience, accountability and transparency, explainability and interpretability, privacy enhancement, and fairness with the management of harmful bias. Notably, NIST treats these as interdependent — not a menu from which practitioners choose.

Without proper oversight, AI implementations can lead to biased outcomes, regulatory violations, data breaches, and erosion of stakeholder trust, consequences that can devastate both reputation and bottom line. The pattern is consistent across industries: the failures that create enterprise-level damage are rarely exotic adversarial attacks. They are models deployed before adequate validation, on data that did not represent the operational population, without monitoring in place.

Robust AI requires accounting for natural data drift within the operational environment compared to training data, documenting data provenance, and maintaining version control such that any auditor can determine which model version was in use at any given moment, which training data it relied on, and what outputs it produced. These are engineering requirements, not aspirational standards.
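Drift between training data and the operational environment can be quantified. One widely used measure is the Population Stability Index (PSI), which compares the share of values falling into each bin across two samples. The sketch below is a minimal pure-Python version under stated assumptions: the bin edges, the sample values, and the 0.1 and 0.25 cutoffs are illustrative (the cutoffs are a common rule of thumb, not a requirement of any framework cited here).

```python
import bisect
import math


def bin_shares(values, bin_edges, eps=1e-6):
    """Fraction of values in each bin; bin_edges must be sorted ascending."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(bin_edges) + 1)
    for value in values:
        counts[bisect.bisect_right(bin_edges, value)] += 1
    # A small floor avoids log(0) when a bin is empty in one sample.
    return [max(count / len(values), eps) for count in counts]


def population_stability_index(training, live, bin_edges):
    """PSI = sum over bins of (live - training) * ln(live / training)."""
    expected = bin_shares(training, bin_edges)
    actual = bin_shares(live, bin_edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def drift_label(psi, watch=0.1, investigate=0.25):
    """Map a PSI value to an action label using assumed rule-of-thumb cutoffs."""
    if psi < watch:
        return "stable"
    if psi < investigate:
        return "watch"
    return "investigate"


# Made-up feature values: half the training data sits below 0.5,
# but only a fifth of the live data does.
training = [0.1] * 50 + [0.6] * 50
live = [0.1] * 20 + [0.6] * 80
psi = population_stability_index(training, live, bin_edges=[0.5])
print(f"PSI = {psi:.3f} -> {drift_label(psi)}")
```

For this invented sample the index is roughly 0.42, well past the assumed investigation cutoff. Logging the PSI alongside the model version and data snapshot gives an auditor the trail described above.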

For agentic AI — systems that take sequential actions, use tools, and operate with limited real-time human supervision — the safety bar rises substantially. AI control systems designed to monitor autonomous agents and enforce ethical limits in real-time represent an emerging approach to agentic governance, including supervisory agents that can monitor and correct other agents’ actions. Robustness in agentic contexts requires fail-safe escalation paths, defined intervention thresholds, and tested rollback mechanisms.
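The three requirements named above (escalation paths, intervention thresholds, rollback) fit together in a simple control loop. The sketch below is a toy illustration, not a real agent framework: the `Step` structure, the per-step `risk` score, and the `risk_limit` and `max_steps` defaults are all hypothetical. It assumes every action the agent takes comes paired with a tested way to undo it.

```python
from typing import Callable, List, NamedTuple


class Step(NamedTuple):
    name: str
    risk: float                  # hypothetical risk score in [0, 1]
    apply: Callable[[], None]    # performs the action
    undo: Callable[[], None]     # reverses the action


def run_with_supervision(steps: List[Step], risk_limit=0.7, max_steps=10):
    """Apply steps in order; on a breach, roll back and escalate to a human."""
    applied = []
    for index, step in enumerate(steps):
        over_budget = index >= max_steps
        too_risky = step.risk > risk_limit
        if over_budget or too_risky:
            # Undo in reverse order so later actions are reversed first.
            for done in reversed(applied):
                done.undo()
            reason = "step budget exceeded" if over_budget else "risk over limit"
            return {
                "status": "escalated",
                "blocked_step": step.name,
                "reason": reason,
                "rolled_back": [s.name for s in reversed(applied)],
            }
        step.apply()
        applied.append(step)
    return {"status": "completed", "applied": [s.name for s in applied]}


# Toy example: a ledger the agent writes to, with one step over the limit.
ledger = []
plan = [
    Step("draft", 0.1, lambda: ledger.append("draft"), lambda: ledger.remove("draft")),
    Step("send", 0.9, lambda: ledger.append("send"), lambda: ledger.remove("send")),
]
print(run_with_supervision(plan))
print(ledger)
```

Here the second step is blocked, the first is rolled back, and the ledger ends empty. The design choice worth noting is that the supervisor never decides what to do next; it only stops, restores, and hands the decision to a person.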

The operative test: Has your AI solution been validated — not just tested — against real-world population data, with documented performance thresholds that trigger human review or system halt when breached?
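Documented thresholds are only useful if something acts on them. The sketch below shows one way to encode two tiers per metric, one that triggers human review and one that halts the system. The metric names and every number in `THRESHOLDS` are hypothetical; real values would come from validation against the operational population.

```python
from enum import IntEnum


class Action(IntEnum):
    CONTINUE = 0
    HUMAN_REVIEW = 1
    HALT = 2


# Hypothetical documented thresholds for one AI solution.
THRESHOLDS = {
    "accuracy": {"review_below": 0.92, "halt_below": 0.85},
    "recall": {"review_below": 0.88, "halt_below": 0.80},
}


def evaluate(metrics, thresholds=THRESHOLDS):
    """Return the most severe action required, plus the breaches behind it."""
    action = Action.CONTINUE
    breaches = []
    for name, limits in thresholds.items():
        value = metrics.get(name)
        if value is None:
            # A metric that is not reported cannot be shown to be healthy.
            step = Action.HUMAN_REVIEW
            breaches.append(f"{name}: not reported")
        elif value < limits["halt_below"]:
            step = Action.HALT
            breaches.append(f"{name}: {value} below halt level {limits['halt_below']}")
        elif value < limits["review_below"]:
            step = Action.HUMAN_REVIEW
            breaches.append(f"{name}: {value} below review level {limits['review_below']}")
        else:
            step = Action.CONTINUE
        action = max(action, step)
    return action, breaches


action, breaches = evaluate({"accuracy": 0.90, "recall": 0.79})
print(action.name, breaches)
```

In this example accuracy alone would call for review, but recall has crossed its halt level, so the overall result is a halt. Treating a missing metric as a breach is a deliberate choice: silence from monitoring should never read as health.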

4. Transparent & Explainable

Transparency is not about publishing model architecture. It is about giving affected individuals the information they need to understand, question, and reject AI-driven conclusions that affect them.

All seven leading national AI ethics frameworks studied in a 2025 Springer analysis emphasize the Model Development and Monitor stages, while significant gaps persist in ethical guidance for other lifecycle stages — including the point at which end users interact with AI outputs and need to understand them. That gap is where real explainability failures occur.

The UNESCO Ethical Impact Assessment covers the entire AI lifecycle, from initial design to post-deployment monitoring, and includes both procedural safeguards — transparency, auditability, stakeholder engagement — and substantive checks including fairness, nondiscrimination, and human rights protection.

Explainability must be calibrated to the audience. A radiologist asking why an AI flagged an anomaly needs a different explanation than a patient asking why their insurance claim was denied. The obligation is the same — to provide a meaningful, contestable account — but the form is context-dependent.

Without transparency and accountability structures, organizations risk creating “black box” systems that generate legal liability and ethical breaches while eroding stakeholder trust. In regulated industries, explainability is increasingly a legal obligation, not a design preference. The EU AI Act, SR 11-7 from the Federal Reserve, and FDA guidance on Software as a Medical Device all impose varying levels of explainability requirements on high-risk AI systems.

There is a second transparency obligation that receives less attention: communicating the limitations of AI solutions clearly and proactively. An AI that is 94% accurate is also 6% wrong. End users need to know that, and need to understand under what conditions performance degrades. Limitation disclosure is not a sign of weakness. It is a precondition for safe use.
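A single headline accuracy figure can hide exactly the conditions users need to know about. The sketch below breaks accuracy down by operating condition. The evaluation records and the condition labels are invented for illustration: 1,000 cases that average out to 94% overall.

```python
from collections import defaultdict


def overall_accuracy(records):
    """records: sequence of (condition, predicted, actual) tuples."""
    records = list(records)
    hits = sum(1 for _, predicted, actual in records if predicted == actual)
    return hits / len(records)


def accuracy_by_condition(records):
    """Accuracy computed separately for each operating condition."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for condition, predicted, actual in records:
        totals[condition] += 1
        correct[condition] += int(predicted == actual)
    return {condition: correct[condition] / totals[condition] for condition in totals}


# Made-up evaluation set: 800 standard cases and 200 degraded-input cases.
records = (
    [("standard input", 1, 1)] * 776 + [("standard input", 1, 0)] * 24
    + [("degraded input", 1, 1)] * 164 + [("degraded input", 1, 0)] * 36
)
print(f"overall: {overall_accuracy(records):.0%}")
for condition, accuracy in accuracy_by_condition(records).items():
    print(f"{condition}: {accuracy:.0%}")
```

With this invented data the system is 94% accurate overall, 97% on standard input, and 82% on degraded input. The second and third numbers are the disclosure; the first one alone is marketing.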

The operative test: Can your end users — not your developers — describe in plain language what the AI concluded, why it concluded it, and how they would challenge it if they believed it was wrong?

5. Eco-Responsible

The environmental cost of AI is no longer speculative. It is measured, growing, and largely unacknowledged in the ethical discourse that governs most AI deployments.

The carbon footprint of AI systems in 2025 could reach between 32.6 and 79.7 million tonnes of CO₂ emissions, while the water footprint could reach 312.5 to 764.6 billion liters — roughly equivalent to the global annual consumption of bottled water.

A separate projection, expressed in U.S. terms, finds that by 2030 the current rate of AI growth would annually put 24 to 44 million metric tons of carbon dioxide into the atmosphere, the emissions equivalent of adding 5 to 10 million cars to U.S. roadways, and drain 731 to 1,125 million cubic meters of water, equal to the annual household water use of 6 to 10 million Americans.

The supply chain dimension compounds this. The carbon footprint of AI consists of embodied emissions from manufacturing IT equipment and constructing data centers, and operational emissions from electricity consumed by AI workloads. Scope 3 emissions — including embodied emissions — range from approximately one-third to two-thirds of overall lifetime emissions.

Making AI models more efficient is described by MIT researchers as the single most important action to reduce AI’s environmental costs. This translates into concrete decisions at the solution design level: model selection (a smaller fine-tuned model vs. a general-purpose frontier model), inference optimization, hardware efficiency, and workload scheduling to leverage renewable energy availability.

An actionable roadmap exists: smart siting of data infrastructure, faster grid decarbonization, and operational efficiency improvements could cut carbon impacts by approximately 73% and water impacts by 86% compared to worst-case scenarios. These are not incremental improvements. They are structural choices made at the point of AI solution architecture.

Eco-responsibility also has a governance dimension. Organizations that cannot report the energy consumption and carbon intensity of their AI workloads are not in a position to manage them. What is not measured cannot be minimized.

The operative test: Does your AI solution have a documented environmental impact profile — including compute hours, energy source, water consumption, and hardware lifecycle — and has that profile been considered in the deployment decision?
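An impact profile of this kind reduces to a few multiplications. The sketch below is a first-order estimate under stated assumptions: energy is accelerator hours times average power times the facility's power usage effectiveness (PUE), operational emissions are energy times grid carbon intensity, and embodied emissions are supplied as an already-amortized figure. Every field name and every number is illustrative, not a measured value.

```python
from dataclasses import dataclass


@dataclass
class WorkloadProfile:
    """First-order environmental profile for one AI workload."""
    accelerator_hours: float      # total compute hours
    avg_power_kw: float           # average draw per accelerator, in kW
    pue: float                    # facility power usage effectiveness
    grid_kg_co2e_per_kwh: float   # carbon intensity of the energy source
    water_l_per_kwh: float        # water consumed per kWh, cooling and supply
    embodied_kg_co2e: float       # amortized share of hardware manufacturing

    @property
    def energy_kwh(self) -> float:
        return self.accelerator_hours * self.avg_power_kw * self.pue

    @property
    def operational_kg_co2e(self) -> float:
        return self.energy_kwh * self.grid_kg_co2e_per_kwh

    @property
    def water_liters(self) -> float:
        return self.energy_kwh * self.water_l_per_kwh

    @property
    def total_kg_co2e(self) -> float:
        return self.operational_kg_co2e + self.embodied_kg_co2e

    @property
    def embodied_share(self) -> float:
        return self.embodied_kg_co2e / self.total_kg_co2e


# All values below are invented for illustration.
profile = WorkloadProfile(
    accelerator_hours=10_000,
    avg_power_kw=0.7,
    pue=1.2,
    grid_kg_co2e_per_kwh=0.4,
    water_l_per_kwh=1.8,
    embodied_kg_co2e=1_680.0,
)
print(f"energy: {profile.energy_kwh:,.0f} kWh")
print(f"operational: {profile.operational_kg_co2e:,.0f} kg CO2e")
print(f"water: {profile.water_liters:,.0f} L")
print(f"embodied share: {profile.embodied_share:.0%}")
```

With these invented inputs the workload uses roughly 8,400 kWh and the embodied share comes to about one-third of the total, the low end of the range cited above. The value of the structure is comparison: rerun it with a smaller model or a cleaner grid and the deployment decision has a number attached.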

The Integration Imperative

These five principles are not a checklist. They are a system. An AI solution that is transparent but unaccountable provides the appearance of trust without the substance. One that is fair in its outputs but environmentally reckless externalizes harm onto communities that will never see its benefits.

Translating abstract ethical principles into concrete, lifecycle-stage-specific requirements — not merely affirming them at the organizational level — is the defining challenge in AI ethics today. The organizations that treat governance as an engineering constraint rather than a compliance exercise will be the ones that scale AI responsibly without incurring the reputational, regulatory, and operational costs that are becoming the predictable consequence of neglect.

The framework is clear. The gap is in execution.

This article draws on research from the NIST AI RMF, UNESCO Recommendation on the Ethics of AI, EU AI Act, Nature Sustainability, MIT, Cornell, and Springer AI Ethics journals. Data reflects findings published through early 2026.