The Missing Layer in Your AI Stack

Why the hardest problem in enterprise AI isn’t building agents — it’s proving they’re governed.

• • •

The technology part works. LLM providers are reliable. Agent frameworks handle orchestration. RAG pipelines retrieve context. The infrastructure is genuinely good. But in regulated industries — healthcare, financial services, life sciences, government — there’s a chasm between “technically functional” and “allowed to operate.” And the thing sitting in that chasm is governance.

Not governance as a buzzword. Governance as an engineering discipline: who decided this agent can approve transactions? What risk assessment was that based on? Which regulations apply? Where does a human review the agent’s work? What’s the audit trail?

In most organizations, the honest answer to those questions is: nobody decided formally, there was no risk assessment, the regulations haven’t been mapped, human review is ad hoc, and the audit trail is application logs.

This is the problem I’ve been working on. Not because governance is a trendy topic, but because it’s the actual blocker preventing AI agents from reaching production in the industries where they could do the most good.

The Numbers Are Stark

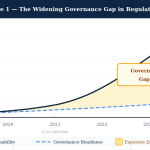

Before getting into the structural analysis, it’s worth looking at the data directly:

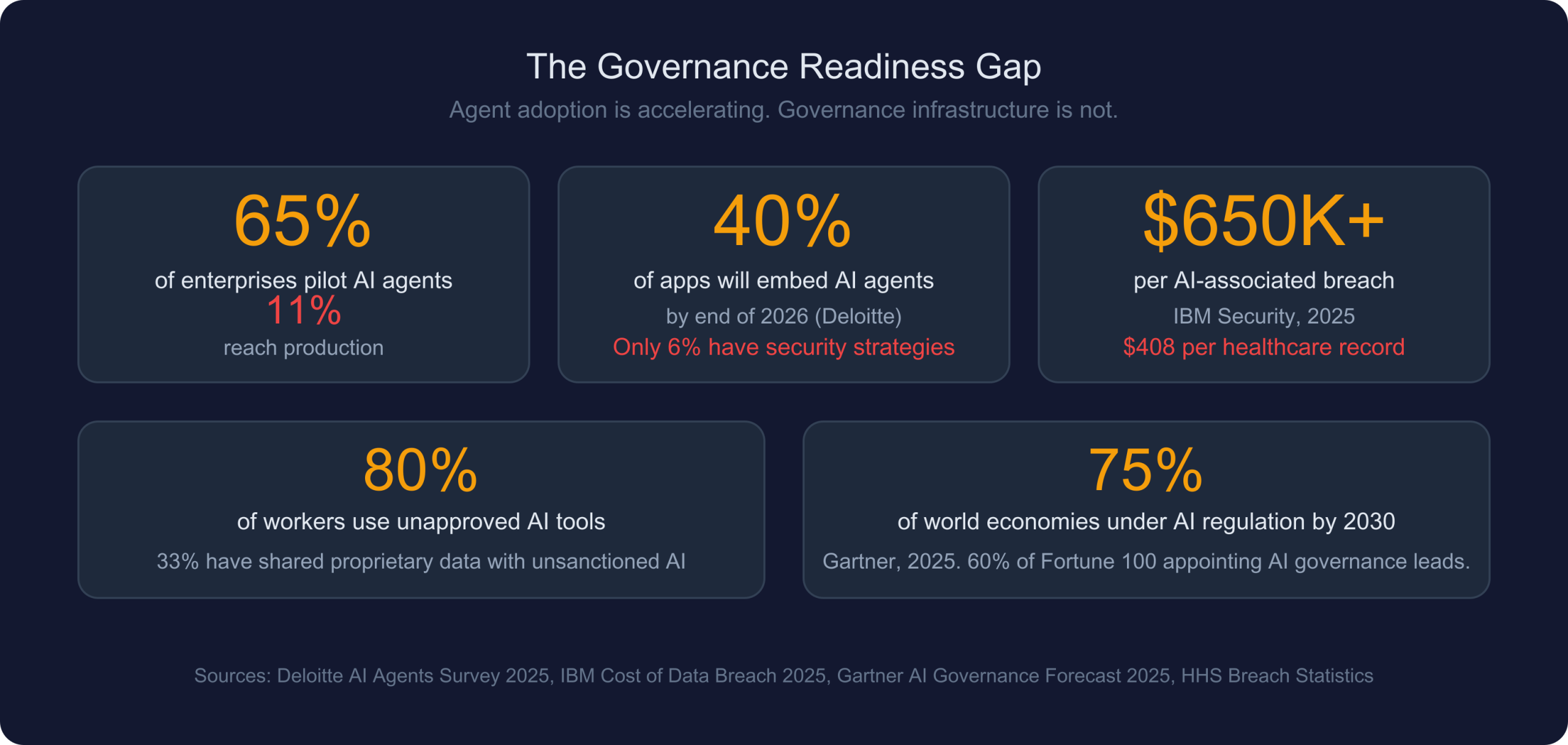

- 65% of enterprises are piloting AI agents. Only 11% reach production deployment. The number-one cited blocker is governance readiness — not technical capability, not cost, not talent.

- 40% of enterprise applications will embed autonomous AI agents by end of 2026 (Deloitte). Meanwhile, only 6% of organizations have advanced AI security strategies. That’s a 34-point gap between adoption intent and governance readiness.

- 80% of knowledge workers already use unapproved AI tools. 33% have shared proprietary data with unsanctioned AI systems. The agents are already here — they’re just ungoverned.

- AI-associated data breaches cost $650K+ per incident (IBM Security). Healthcare records cost $408 per breached record (HHS). The financial exposure is real and quantifiable.

- 75% of world economies will be covered by AI regulation by 2030 (Gartner). 60% of Fortune 100 companies plan to appoint a head of AI governance by end of 2026.

The adoption curve is steep. The governance curve is flat. Every quarter that gap widens, and the cost of closing it later increases.

Three Tool Categories, No Complete Solution

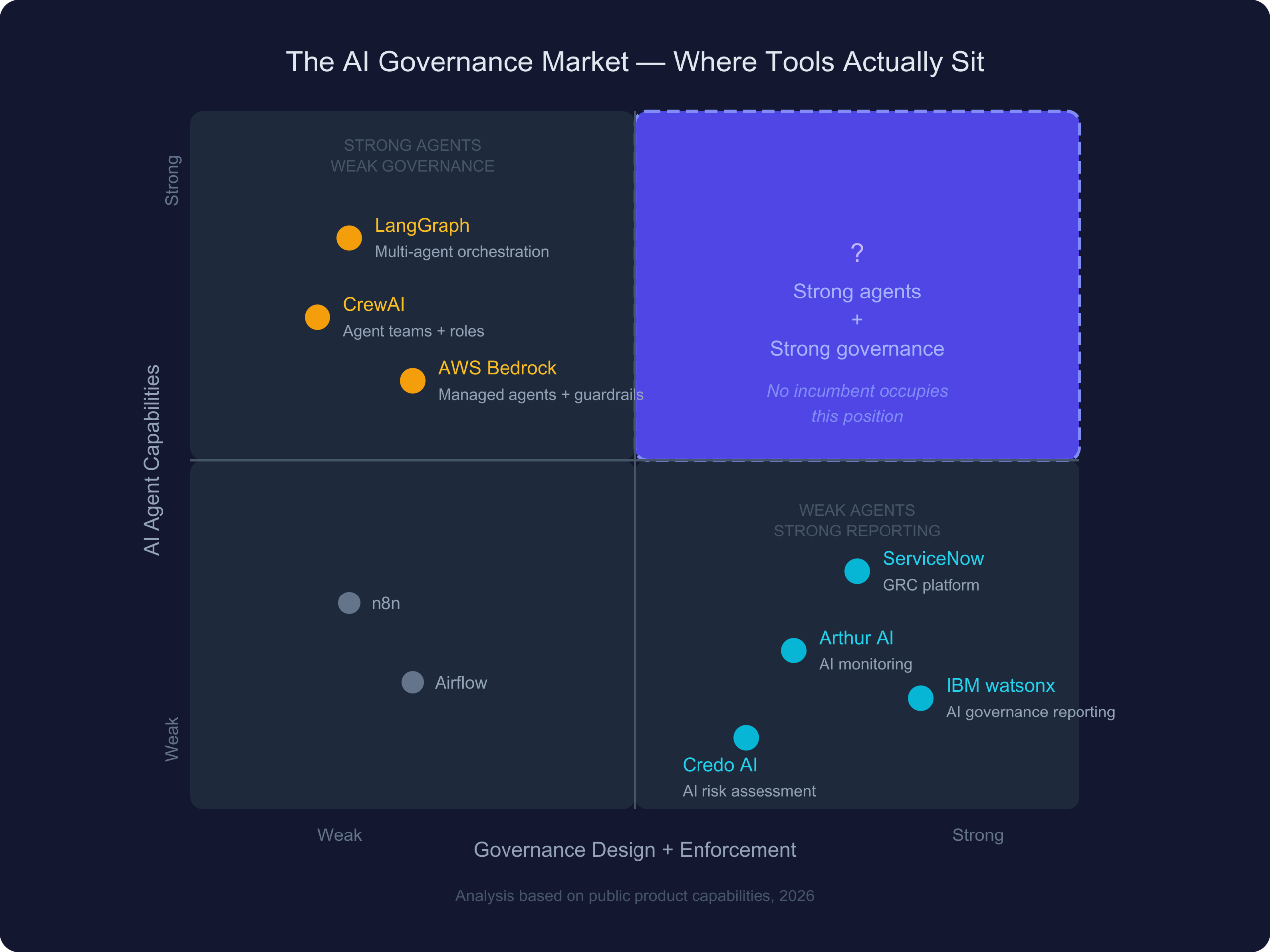

I’ve looked at this from every angle: enterprise GRC platforms, AI agent frameworks, AI monitoring tools, standards and regulatory frameworks (NIST AI RMF, ISO 42001, EU AI Act technical standards), and industry governance playbooks from every major cloud provider and AI lab. The pattern is consistent: three separate tool categories each solve part of the problem, but none solves the whole thing, and they don’t compose into a solution.

Enterprise GRC Platforms

MetricStream, ServiceNow GRC, AuditBoard, Archer — these platforms are essential for organizational risk management. They maintain risk registers, coordinate committee reviews, generate compliance reports, track audit findings. Enterprises with mature GRC practices have spent years and millions of dollars building this infrastructure.

But GRC platforms were designed around human-speed processes. Quarterly risk assessments. Annual audits. Policy approval workflows that take days or weeks. They operate at the cadence of committee meetings and compliance reports.

An AI agent processing insurance claims or assisting with clinical decisions operates at a fundamentally different timescale. Hundreds of micro-decisions per minute. Each one potentially consequential. Each one, in a regulated context, potentially subject to documentation requirements, regulatory constraints, and human oversight obligations.

GRC platforms can’t observe this. They have no concept of “agent autonomy level” or “human-in-the-loop gate placement” or “risk-derived capability constraints.” They document policies about AI. They don’t design or enforce the governance architecture that agents operate within.

AI Agent Frameworks

LangGraph, CrewAI, AWS Bedrock Agents, Google ADK, Microsoft Semantic Kernel — these are sophisticated tools. They handle multi-step reasoning, tool calling, memory, parallel execution, human-in-the-loop patterns. The quality of agent orchestration available today is genuinely impressive.

But governance in these frameworks is entirely the developer’s responsibility. There’s no built-in concept of risk-derived autonomy ceilings — the idea that an agent’s authority level should be computed from its risk profile, not chosen by a developer. No regulatory mapping. No structured HITL gates with SLA enforcement and escalation chains. No checksum-chained audit trails.

The typical pattern: build the agents, get them working, deploy to a test environment, start thinking about governance when compliance raises concerns. “Start thinking about governance” translates, in practice, to 6–12 months of retrofitting — adding review gates that require rearchitecting data flows, mapping regulations to controls that weren’t designed with those regulations in mind, building audit trails by instrumenting decision points that were never designed to be auditable.

I’ve talked to teams where the governance retrofit took longer than building the agents.

AI Monitoring and Risk Platforms

Arthur AI, IBM watsonx.governance, Credo AI, Holistic AI — these platforms observe deployed AI systems and report on bias, drift, fairness, explainability, and compliance gaps. They serve a real purpose: ongoing operational oversight of AI systems in production.

But they operate on a deployed system. They can detect that an agent’s outputs have drifted from baseline, or that a model exhibits bias. What they can’t do is design the governed workflow in the first place. They don’t produce the risk assessment, the HITL gate architecture, the regulatory mapping, or the audit-ready documentation that regulators actually ask for. They tell you what went wrong. They don’t create the architecture that prevents it.

The Empty Quadrant

Map these tool categories on two axes — AI agent execution capability and governance design/enforcement capability — and the picture is clear:

Agent frameworks cluster in the upper-left: strong agents, weak governance. GRC and monitoring tools cluster in the lower-right: strong governance reporting, no agent execution. The upper-right quadrant — strong agent capabilities combined with strong governance design and runtime enforcement — is empty.

That’s not a coincidence. It’s a genuinely hard combination to build. Governance-first agent design requires deep domain knowledge across multiple regulated industries, regulatory mapping across jurisdictions, and a fundamentally different architecture than capability-first agent builders provide.

But someone needs to build it. Because the current state — governed enterprise AI as a 12-month retrofit project — doesn’t scale.

What Goes Wrong Without a Governance Layer

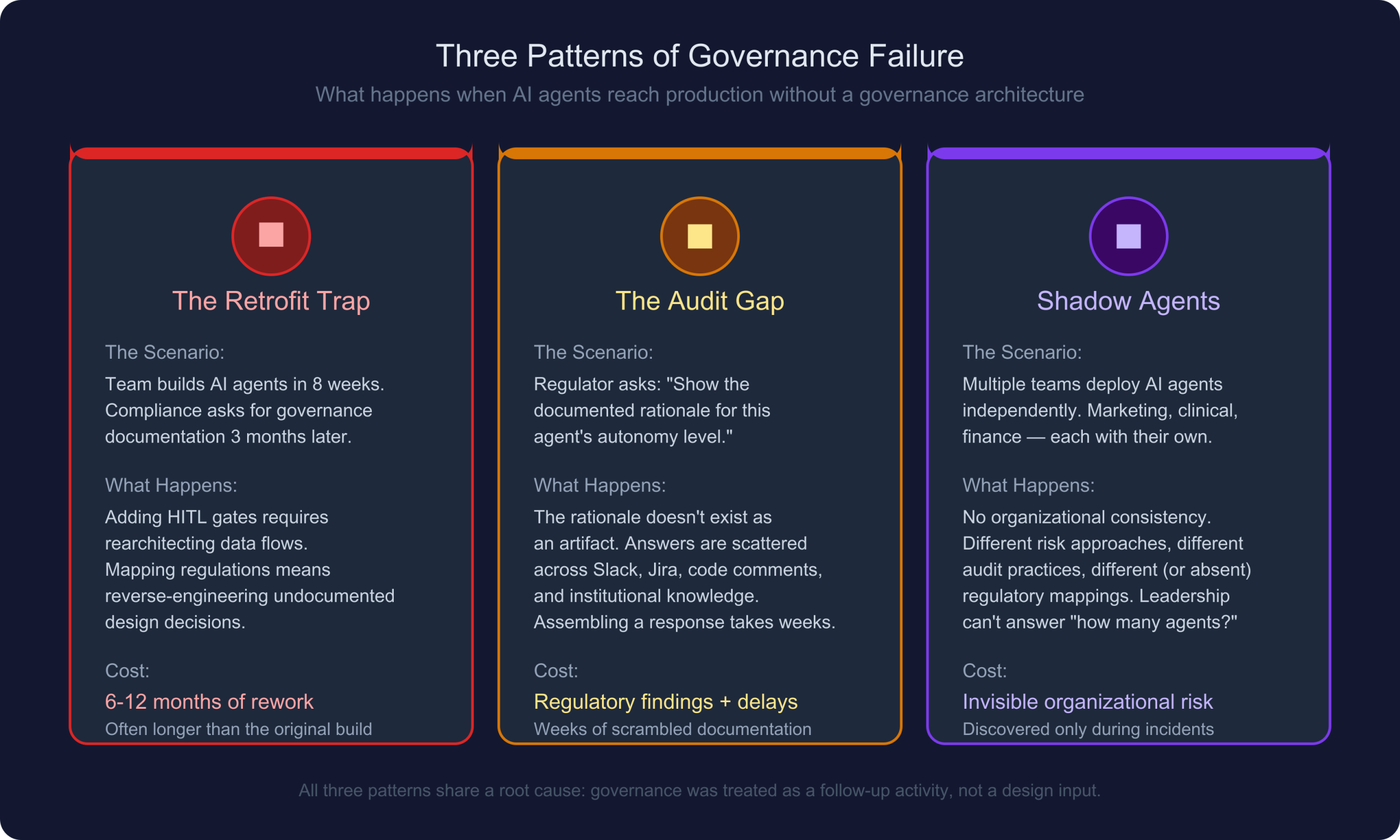

The consequences aren’t hypothetical. They’re playing out in organizations right now. Three patterns recur:

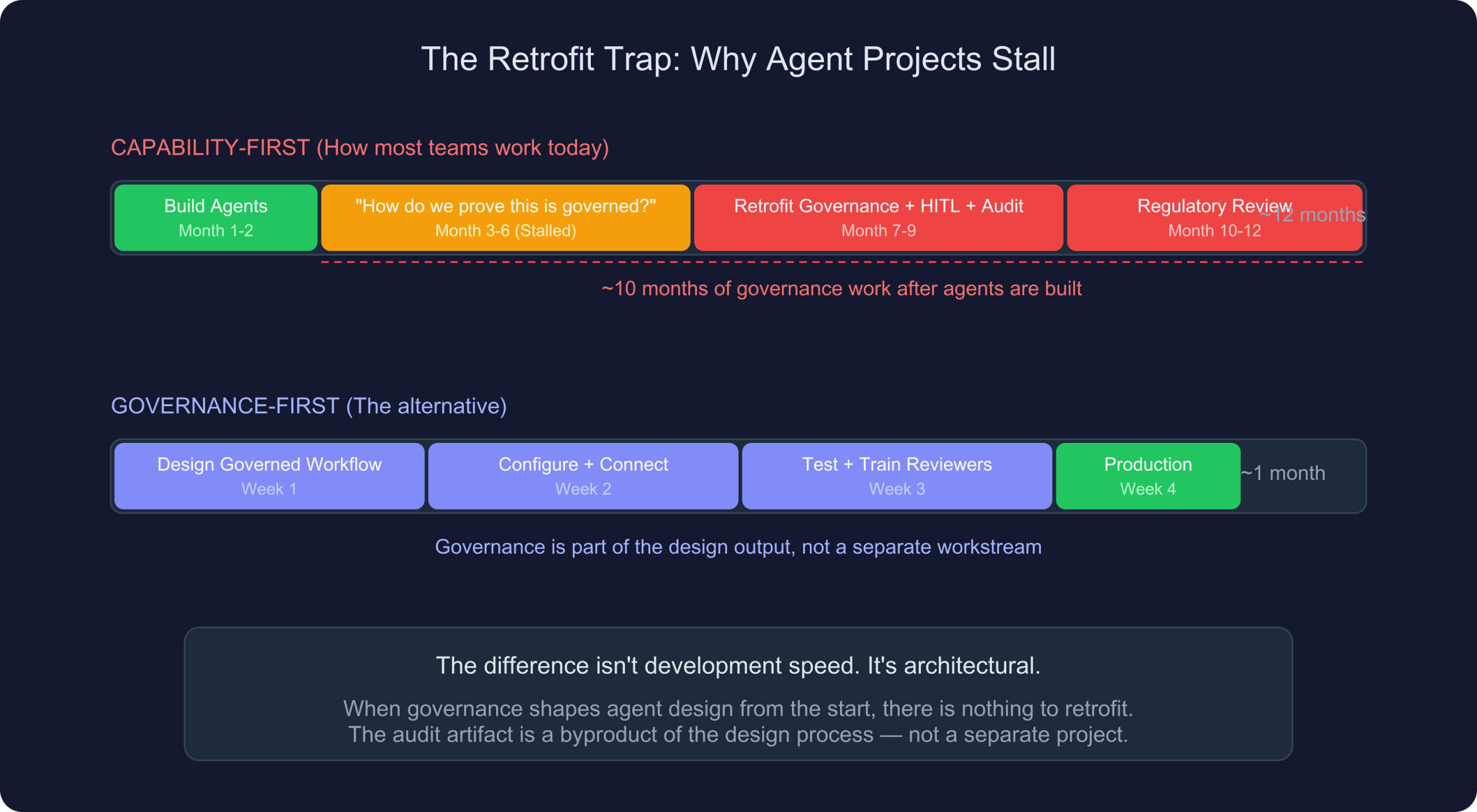

The Retrofit Trap

A financial services team builds an AI agent that processes loan applications. Technically excellent — accurate risk scoring, fast processing, good user experience. Ships to a test environment in 8 weeks.

Three months later, the compliance team asks reasonable questions: How does this agent decide which applications need human review? Where’s the regulatory mapping to BSA/AML and fair lending requirements? What’s the audit trail for adverse action decisions?

The retrofit begins. Adding human-in-the-loop review gates requires rearchitecting the data flow — the agent wasn’t designed with structured decision handoff points. Mapping to regulations means understanding design decisions that were made informally and never documented. Building an audit trail means instrumenting every decision point after the architecture was finalized.

Eight months of rework. Often longer than building the agent.

The root cause isn’t that the team was careless. It’s that no governance architecture existed before agent design began. The compliance questions are perfectly reasonable — but answering them requires changes so deep that they amount to a redesign.

The Audit Artifact Gap

A healthcare organization deploys an AI system that assists with prior authorization decisions. An OIG audit asks for documentation: what data does the agent access? What’s the documented rationale for its autonomy level? How are edge cases routed to human review? Which regulatory requirements were considered in its design?

The honest answer: the rationale for the agent’s authority level doesn’t exist as a formal artifact. It was decided implicitly — by whoever configured the system. The regulatory mapping was informal (“we thought about HIPAA”). The audit trail is application logs that weren’t designed for regulatory review.

Assembling a coherent response takes weeks. It still leaves gaps, because you can’t retroactively create a governance rationale that was never formalized.

Shadow Agents

Across regulated industries, individual teams are deploying AI agents without centralized governance. Marketing built one that accesses customer data. The clinical team has one that assists with treatment planning. Finance deployed one that generates reports from sensitive data.

Each team believes their agent is adequately governed because they added some guardrails. But there’s no organizational consistency — different risk assessment approaches (or none), different regulatory mappings (or none), different audit practices (or none).

When leadership asks “how many AI agents do we have, and are they all governed?” — nobody has a confident answer. The organization’s AI risk surface is unknown.

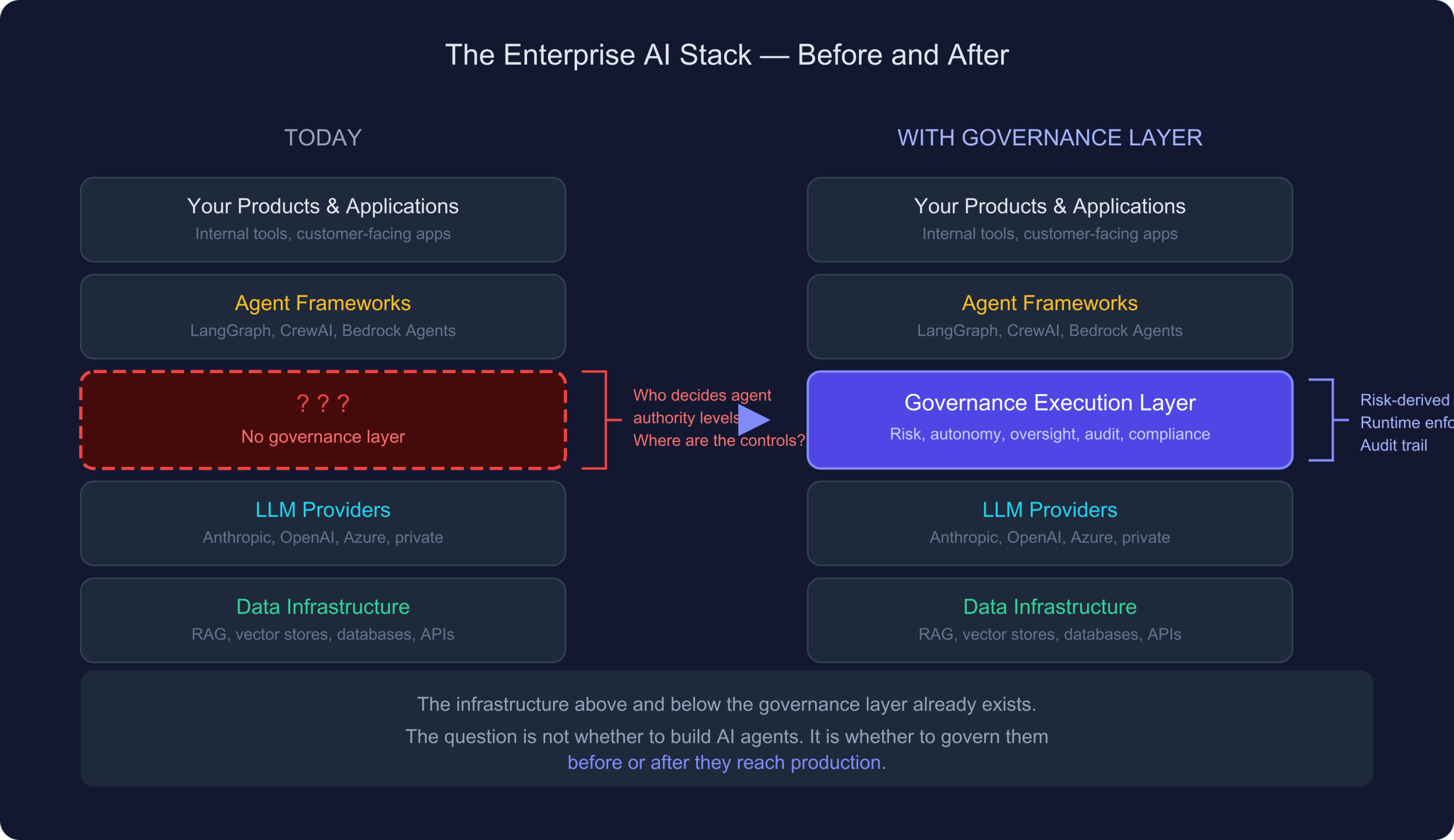

The Stack Is Missing a Layer

After studying this problem across industries, my conclusion is structural: the enterprise AI stack is missing a layer.

The layers above and below are well-served by existing tools. What’s missing is the layer between them — the one that answers: what is each agent allowed to do? Which regulations apply? Where must a human review? What’s the audit trail? How do we detect drift?

Today, the answer to all of these is “we’ll figure it out later” or “the developer handles it.” Neither scales.

What a Governance Execution Layer Should Look Like

I don’t think this is a monitoring problem (observe and report). I don’t think it’s a GRC problem (policy management at human speed). And I don’t think it’s a framework feature (add governance as a library to an existing agent framework). I think it’s a distinct layer with specific properties:

Governance before agents. The design sequence matters. If you build agents first and add governance later, governance is a constraint bolted onto a fixed architecture. If governance shapes the agent design from the start, the constraints are constitutive — they define what agents can do, not just what they can’t. A risk assessment should determine autonomy levels. Regulatory requirements should determine where human review is mandatory. The agent’s capabilities should be what’s left after governance boundaries are drawn.

Risk-derived, not developer-chosen. An agent’s authority level — how much it can do without human approval — should be derived from a structured risk assessment, not chosen by the developer who builds it. This makes the autonomy level auditable and traceable. When a regulator asks “why can this agent do X without human review?” — the answer is embedded in the design artifact.
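To make this concrete, here is a minimal Python sketch of deriving an autonomy ceiling from a risk assessment. The level names, the three risk factors, the 1-to-5 scale, and the worst-factor rule are all assumptions chosen for illustration, not a standard scoring method. The only point is that the ceiling is computed, and whatever level a developer requests is clamped to it.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy levels, lowest to highest."""
    SUGGEST_ONLY = 0       # agent drafts, a human decides
    ACT_WITH_APPROVAL = 1  # agent acts after human sign-off
    ACT_AND_NOTIFY = 2     # agent acts, a human is informed
    FULLY_AUTONOMOUS = 3   # agent acts without review


@dataclass(frozen=True)
class RiskAssessment:
    """Illustrative risk factors, each scored 1 (low) to 5 (high)."""
    impact: int
    data_sensitivity: int
    reversibility: int  # 5 = very hard to undo


def autonomy_ceiling(risk: RiskAssessment) -> Autonomy:
    """Derive the ceiling from the worst-scoring factor (an assumed rule)."""
    worst = max(risk.impact, risk.data_sensitivity, risk.reversibility)
    if worst >= 5:
        return Autonomy.SUGGEST_ONLY
    if worst == 4:
        return Autonomy.ACT_WITH_APPROVAL
    if worst == 3:
        return Autonomy.ACT_AND_NOTIFY
    return Autonomy.FULLY_AUTONOMOUS


def effective_autonomy(requested: Autonomy, risk: RiskAssessment) -> Autonomy:
    """A developer may request less than the ceiling, never more."""
    return min(requested, autonomy_ceiling(risk))
```

For a loan-processing agent scored `RiskAssessment(impact=4, data_sensitivity=3, reversibility=2)`, the ceiling comes out as `ACT_WITH_APPROVAL` even if the developer requested `FULLY_AUTONOMOUS`. The assessment object itself is the artifact you can hand to a regulator.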

Runtime enforcement, not just design-time. Design-time governance establishes what agents should do. Runtime enforcement ensures they actually do it. Agents should receive only the data they’re authorized to access. Tool calls should be checked against governance constraints at invocation time. Autonomy ceilings should be enforced per-action. Drift should trigger increased oversight automatically. Design-time governance that isn’t enforced at runtime is documentation, not governance.
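A rough sketch of what invocation-time enforcement could look like, in Python. `Policy`, `GovernedToolbox`, and the `max_amount` ceiling are hypothetical names invented for this example, and no real agent framework’s API is implied. It covers three of the checks above: tool allow-listing, a per-action ceiling, and filtering the record down to authorized fields before the tool ever sees it.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class GovernanceViolation(Exception):
    """Raised when a tool call falls outside the agent's policy."""


@dataclass
class Policy:
    """Hypothetical per-agent policy produced at design time."""
    allowed_tools: set[str]
    allowed_fields: set[str]
    max_amount: float  # illustrative per-action ceiling


@dataclass
class GovernedToolbox:
    policy: Policy
    tools: dict[str, Callable[..., Any]] = field(default_factory=dict)

    def call(self, name: str, record: dict[str, Any], amount: float = 0.0) -> Any:
        # Check the tool against the policy at invocation time.
        if name not in self.policy.allowed_tools:
            raise GovernanceViolation(f"tool not permitted: {name}")
        # Enforce the per-action ceiling before anything runs.
        if amount > self.policy.max_amount:
            raise GovernanceViolation(f"amount {amount} exceeds ceiling")
        # Pass the tool only the fields the agent is authorized to see.
        visible = {k: v for k, v in record.items() if k in self.policy.allowed_fields}
        return self.tools[name](visible, amount)
```

The design choice worth noticing is that the agent never holds a direct reference to a tool. Every call goes through `call`, so a tool that is registered but not in `allowed_tools` is unreachable, and a blocked call raises before the underlying function executes.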

Deterministic gates, not confidence-based. Human review points should be triggered by governance rules — risk levels, regulatory requirements, data sensitivity. Not by AI confidence scores. “The model is 95% confident” is not a governance criterion. Governance gates should be deterministic.
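As an illustration, a deterministic gate can be as simple as a pure function over governance facts. The fields and the three rules below are assumptions made up for this sketch. Note that the model’s confidence is carried on the record but deliberately never read by the gate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """Hypothetical facts about a pending agent action."""
    risk_level: str          # "low", "medium", or "high"
    contains_phi: bool       # protected health information involved
    adverse_action: bool     # e.g. a denial that triggers notice duties
    model_confidence: float  # kept for the record, never used to gate


def requires_human_review(d: Decision) -> tuple[bool, list[str]]:
    """Return the gate outcome and the names of the rules that fired."""
    reasons = []
    if d.risk_level == "high":
        reasons.append("risk_level=high")
    if d.contains_phi and d.risk_level != "low":
        reasons.append("phi_with_elevated_risk")
    if d.adverse_action:
        reasons.append("adverse_action")
    return (bool(reasons), reasons)
```

Two decisions that differ only in `model_confidence` always get the same outcome, and the returned list of fired rules gives the reviewer and the auditor the reason the gate triggered.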

Regulatory awareness built in. Manually mapping each AI agent to each applicable regulation across each jurisdiction doesn’t scale. A governance layer should understand which regulations apply based on industry, geography, data types, and decision types — and derive the appropriate controls automatically.
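Here is a toy Python sketch of the idea: describe the workflow once, then derive the applicable regulations from rules rather than by hand. The rule table is an assumption for illustration, heavily simplified and nowhere near complete. Real applicability tests are far more nuanced than a region and a data type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowProfile:
    """Hypothetical description of where and on what a workflow operates."""
    industry: str
    regions: frozenset[str]
    data_types: frozenset[str]


# Illustrative rules only: (regulation name, predicate over the profile).
RULES = [
    ("HIPAA", lambda p: "US" in p.regions and "health" in p.data_types),
    ("GDPR", lambda p: "EU" in p.regions and "personal" in p.data_types),
    ("EU AI Act", lambda p: "EU" in p.regions),
    ("BSA/AML", lambda p: "US" in p.regions and p.industry == "financial_services"),
]


def applicable_regulations(profile: WorkflowProfile) -> list[str]:
    """Evaluate every rule against the profile, preserving table order."""
    return [name for name, applies in RULES if applies(profile)]
```

A healthcare workflow operating in both the US and the EU on health and personal data would come back with HIPAA, GDPR, and the EU AI Act from this table. When a rule changes, every workflow can be re-evaluated against it instead of being re-mapped manually.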

Portable, not locked in. Governance shouldn’t be tied to a specific execution platform. The governance specification should travel with the workflow — whether you run it on your own infrastructure or export to a different platform entirely.
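One way to picture portability is a governance specification that serializes to a plain, platform-neutral format. The sketch below uses JSON and a made-up `GovernanceSpec` schema. The field names are assumptions, not an existing standard.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class GovernanceSpec:
    """Hypothetical, platform-neutral description of a workflow's governance."""
    workflow: str
    autonomy_ceiling: str
    regulations: tuple[str, ...]
    review_gates: tuple[str, ...]

    def to_json(self) -> str:
        # Sorted keys keep the output stable, so it can be diffed and hashed.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "GovernanceSpec":
        raw = json.loads(text)
        return cls(
            workflow=raw["workflow"],
            autonomy_ceiling=raw["autonomy_ceiling"],
            regulations=tuple(raw["regulations"]),
            review_gates=tuple(raw["review_gates"]),
        )
```

Because the spec round-trips through plain JSON, any execution platform that can parse it can be asked to enforce it, and the spec can be versioned alongside the workflow it governs.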

The Regulatory Moment

The timing matters because the regulatory landscape is converging on specific, enforceable requirements for AI systems.

EU AI Act

The EU AI Act is the most comprehensive AI regulation enacted to date. For high-risk AI systems, which include most agentic AI in healthcare, financial services, HR, and critical infrastructure, the requirements are specific and technical:

| Article | Requirement | Practical Implication |
|---|---|---|
| Art 9 | Risk management process | Documented risk assessment throughout the lifecycle |
| Art 10 | Data governance | Data limited to what’s necessary; requires runtime filtering |
| Art 12 | Record-keeping | Tamper-resistant logging with model identification |
| Art 13 | Transparency | Interpretable outputs with structured decision records |
| Art 14 | Human oversight | Effective human control, including the ability to interrupt or override |
| Art 15 | Accuracy & robustness | Continuous monitoring for degradation |

These aren’t abstract principles. They’re engineering requirements that demand specific capabilities. Most enterprises deploying AI agents don’t have these capabilities today. Building them ad hoc, per agent, per team, is the exact scenario that leads to the retrofit trap.

US Regulatory Fragmentation

The US landscape is fragmented but accelerating. Federal frameworks (NIST AI RMF, NIST AI 600-1 for generative AI), sector-specific guidance (FDA for SaMD, OCC/FDIC for banking, SEC for financial AI), and expanding state legislation create a patchwork of compliance obligations that vary by geography, industry, and use case.

The challenge isn’t any single regulation. It’s that a healthcare organization operating in the US and EU faces HIPAA, state privacy laws, EU AI Act, and GDPR — simultaneously, for each AI agent. A financial institution serving global markets faces SOX, BSA/AML, GDPR, MiFID II, and emerging AI-specific regulations. The compliance matrix grows faster than any manual process can manage.

The Timeline Problem

This may be the most important practical observation. The difference in timelines isn’t about development speed; it’s about eliminating an entire phase of work.

When governance is a design input, the risk assessment, regulatory mapping, HITL architecture, and audit trail are outputs of the design process. There’s nothing to add later because they were part of the design from the beginning. One month versus twelve months. Not because the technology is different, but because the sequence is different.

Who Feels This Most

Healthcare. Clinical decision support, prior authorization, care coordination, patient safety. HIPAA. FDA SaMD guidance. State privacy laws. The cost of a governance failure isn’t just financial — it’s patient safety.

Financial Services. Transaction monitoring, credit decisions, claims processing, regulatory reporting. SOX. BSA/AML. Fair lending. SEC/FINRA. The compliance infrastructure is already heavy; ungoverned AI agents add unquantified risk.

Life Sciences. Clinical trials, pharmacovigilance, regulatory submissions, manufacturing quality. ICH guidelines. GxP requirements. The regulatory bar is among the highest of any industry.

Government. Benefits processing, regulatory enforcement, public safety. FedRAMP. FISMA. NIST frameworks. Accountability requirements are non-negotiable.

But it extends beyond traditional “regulated industries.” Any organization where AI agents make or influence consequential decisions — HR hiring, financial approvals, legal review, procurement authorization — faces the same structural problem: how do you prove your AI agents are governed?

The governance layer should design agent architectures, not just audit them. Risk assessment determines autonomy levels. Regulatory mapping determines oversight requirements. These constraints shape the agent design — capabilities are what’s left after governance boundaries are drawn.

Runtime enforcement is non-negotiable. Design-time governance that isn’t enforced during execution is documentation, not governance. Every tool call, every data access, every decision point needs enforcement at the moment it happens.

Human oversight should be structured, not ad hoc. The right human should see the right information at the right time to make an informed decision, with SLA tracking and escalation so that oversight doesn’t become a bottleneck.
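A small Python sketch of SLA-driven escalation, under assumptions of my own: each review task carries an SLA window and an ordered escalation chain, and the task moves one step up the chain for every full window that passes without a decision. The role names in the usage note are placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ReviewTask:
    """Hypothetical human-review task with an SLA and an escalation chain."""
    task_id: str
    created_at: datetime
    sla: timedelta                     # must be positive
    escalation_chain: tuple[str, ...]  # non-empty; first entry is the primary reviewer


def current_assignee(task: ReviewTask, now: datetime) -> str:
    """Move one step up the chain for each full SLA window that has elapsed."""
    elapsed = now - task.created_at
    steps = max(0, elapsed // task.sla)  # timedelta // timedelta yields an int
    index = min(steps, len(task.escalation_chain) - 1)
    return task.escalation_chain[index]
```

With a four-hour SLA and a chain of `("claims_reviewer", "team_lead", "compliance_officer")`, a task that is five hours old belongs to `team_lead`, and the last person in the chain keeps it however long it waits. Because the assignee is a function of timestamps rather than someone remembering to follow up, the escalation itself is reproducible after the fact.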

Audit trails should be tamper-resistant and automatic. When a regulator asks what happened, the answer should be immediate, structured, and cryptographically verifiable. Not “let me pull the logs and assemble a narrative.”
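To show what “cryptographically verifiable” can mean in practice, here is a minimal hash-chained log in Python using SHA-256 from the standard library. The entry layout and the `AuditTrail` class are assumptions for this sketch. Each entry’s hash covers the previous entry’s hash, so editing any past record breaks every link after it.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    # Canonical JSON so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class AuditTrail:
    entries: list[dict] = field(default_factory=list)

    def append(self, payload: dict) -> str:
        """Add a record whose hash is chained to the previous record."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = _digest(prev_hash, payload)
        self.entries.append({"payload": payload, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash and check that each link matches."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if _digest(prev_hash, entry["payload"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

A chain like this only makes tampering detectable. Someone who can rewrite the whole log can also recompute every hash, so the latest hash needs to be anchored somewhere the writer cannot alter, for example in a separate system of record.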

Regulatory intelligence should be systematic. Regulations change. New ones emerge. A governance layer should know which regulations apply to which workflows, and surface changes that affect existing deployments.

The governance specification should be portable. Not locked to any one execution platform, LLM provider, or vendor. Your governance architecture should travel with your workflow.