Governed by Design: What Regulated Industries Must Demand Before the Age of Agentic AI

Over years of deep engagement with professionals across regulated industries, through panel conversations, working sessions, and exchanges spanning multiple countries, one question has stayed with me longer than any other: how do we build AI systems that are accountable not because we checked a box at the end, but because accountability was there from the beginning? That question became a research obsession that has shaped more than two years of late nights and weekends, and each conversation since has only sharpened how much it matters.

This article is an attempt to share some of that thinking—particularly for the professionals and leaders navigating what AI actually means for operations, workforce, and institutional trust in environments where the cost of getting it wrong is not measured in lost revenue alone.

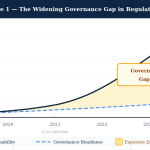

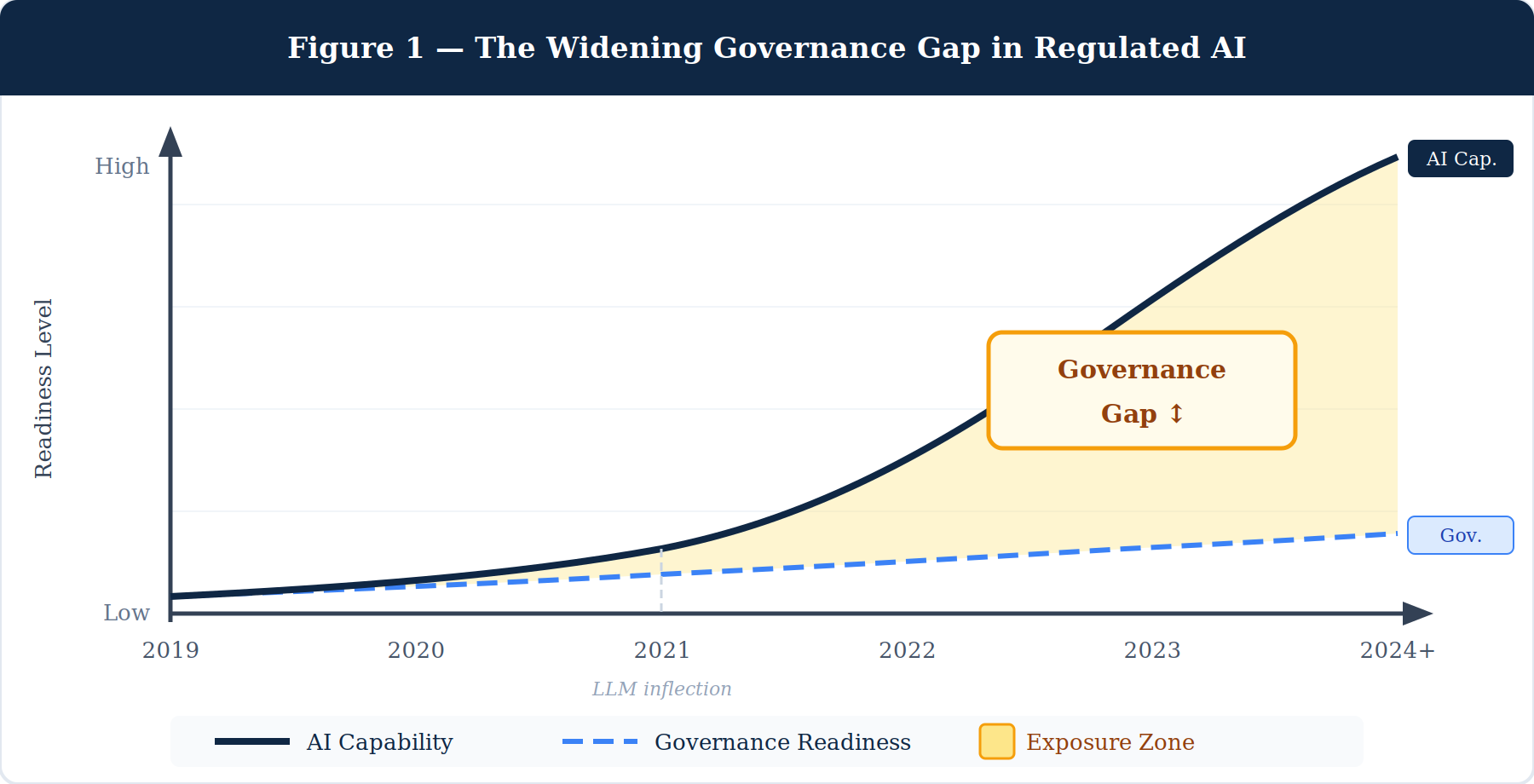

The Capability Gap Is Closing. The Governance Gap Is Not.

AI in regulated industries has reached an inflection point that most coverage gets slightly wrong. The dominant narrative frames AI as a capability story—what models can now predict, generate, classify, or automate. That narrative is real. But it obscures the more consequential challenge facing every organization in life sciences, healthcare, financial services, insurance, and energy that is trying to operationalize AI at scale.

The gap that matters most right now is not between what AI can do and what it cannot. It is between what AI can do and what institutions can safely govern.

The EU AI Act, which came into force in 2024, classifies a significant proportion of AI applications in high-stakes sectors as high-risk, imposing mandatory requirements for transparency, human oversight, technical documentation, and auditability that most existing systems were never built to satisfy [1]. In financial services, regulators from the Basel Committee to the SEC have begun scrutinizing model governance frameworks, flagging that AI systems increasingly embedded in credit decisioning, trade surveillance, and compliance monitoring operate without the auditability that prudential regulation requires [2]. In life sciences, the FDA had authorized some 950 AI-enabled medical devices by mid-2024, yet post-market surveillance frameworks for those tools remain underdeveloped relative to the pace of deployment [3].

The pattern is consistent across sectors and jurisdictions: capability arrived faster than governance infrastructure. That gap is not primarily a technology problem. It is a design problem.

Most AI systems in regulated industries were built to perform. Very few were built to account for themselves. Those are not the same thing, and the difference is starting to matter in ways organizations are unprepared for.

What “Agentic AI” Changes—and Why Regulated Industries Need to Pay Attention Now

For most of the past decade, AI systems deployed across regulated sectors were narrow and deterministic. A model scored credit risk. Another flagged a pharmacovigilance signal. A third detected a transaction anomaly. Each was a bounded tool producing a bounded output. A human reviewed that output and acted. The governance model was relatively straightforward: validate the model, monitor its outputs, keep a human in the loop for final decisions.

That architecture is changing. Rapidly.

Agentic AI refers to systems capable of planning, initiating multi-step actions, calling external tools and data sources, and adapting based on what they encounter—without step-by-step human instruction for each action. A single agentic workflow in financial services might retrieve a client record, assess regulatory suitability, cross-reference internal policy, generate a compliance memo, flag an exception, and route it for review—all within one orchestrated sequence. In life sciences, the equivalent might span signal detection, case narrative generation, regulatory threshold evaluation, and submission preparation. In insurance, it might run from first notice of loss through reserve setting, fraud scoring, and settlement recommendation.

The productivity implications are significant. A 2023 McKinsey Global Institute analysis estimated that generative AI, combined with other technologies, has the potential to automate work activities that absorb 60 to 70 percent of employees’ time today [4]. Agentic capabilities are likely to accelerate that timeline further in compliance-intensive functions. A World Economic Forum report on the future of financial services found that agentic systems applied to regulatory reporting and compliance operations could reduce processing time by up to 50 percent in well-governed implementations [5].

But here is what the efficiency projections consistently underweight: when AI moves from generating an output for a human to initiating a sequence of actions, the accountability question changes fundamentally. It is no longer sufficient to ask whether the model was accurate at the moment of training. You must ask: what does this system do when it encounters a regulatory edge case mid-workflow? What governs the depth and breadth of its autonomy? Who is accountable when a multi-step agent acts incorrectly, and at which step did the failure occur?

Regulated industries cannot afford to answer those questions after the fact.

Governance Cannot Be an Afterthought—It Has to Be a Design Principle

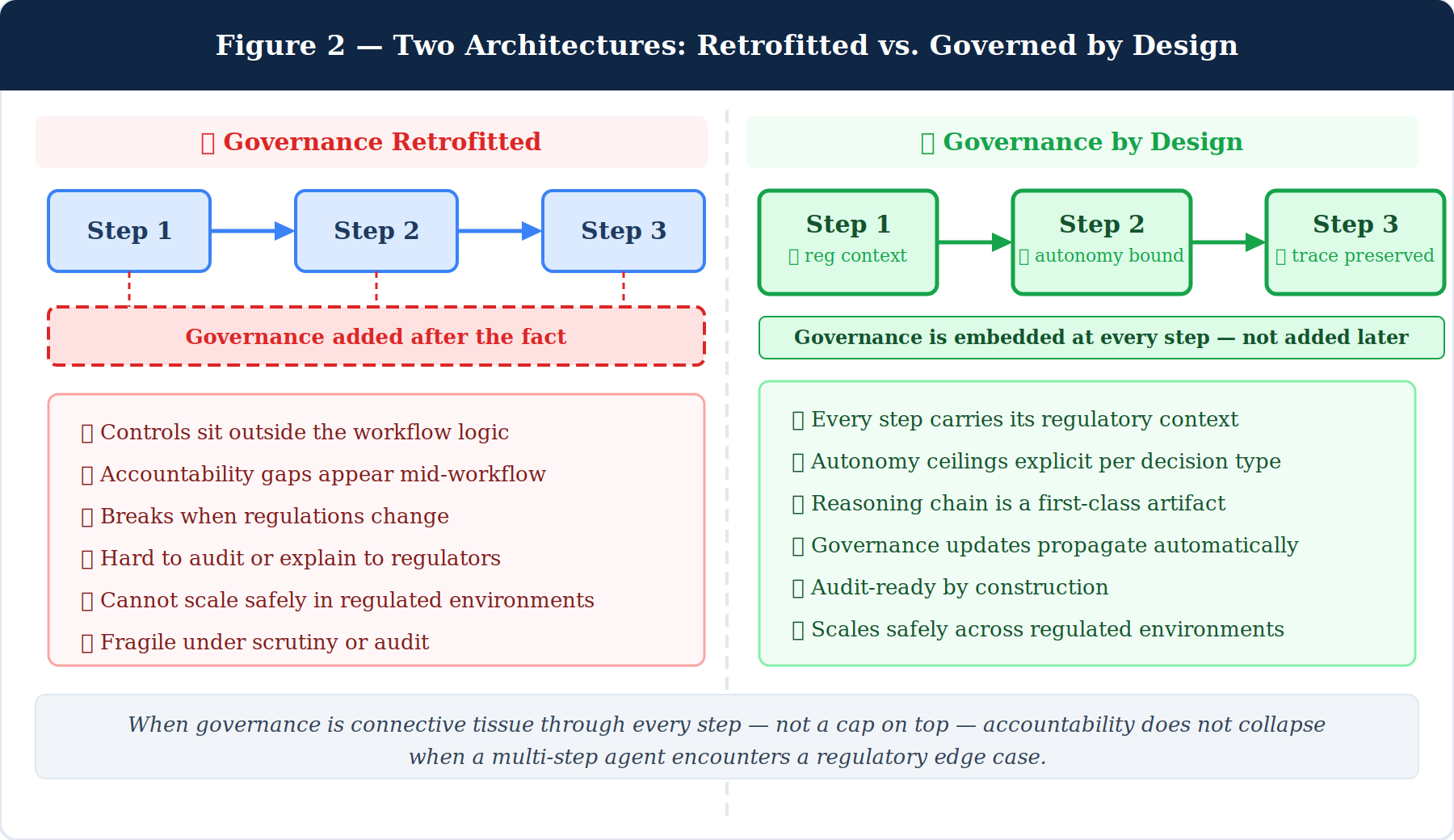

There is a phrase I have returned to repeatedly in both my research and my panel conversations: governance by design, not by audit.

In most enterprise AI deployments today, governance is retrofitted. A model or workflow is built for performance. Compliance and risk functions are brought in later to evaluate it against regulatory requirements, internal policies, and ethical standards. When gaps are found—and they usually are—controls are layered on top of a system that was never architected to carry them. The result is fragile: hard to audit, harder to explain, and nearly impossible to adapt as the regulatory landscape shifts.

What emerging research on trustworthy AI systems suggests instead is something more structurally demanding: that regulatory logic, governance constraints, and accountability mechanisms must be embedded into the architecture of AI workflows from the earliest design decisions, not appended at the end [6].

This is particularly consequential in regulated industries, where the compliance surface is exceptionally complex and layered. A single operational workflow in a life sciences organization may need to simultaneously satisfy FDA 21 CFR Part 11 requirements, ICH E6 Good Clinical Practice standards, EMA pharmacovigilance guidelines, and internal controls under SOX. A financial services workflow may need to reconcile MiFID II suitability obligations, internal credit policy, GDPR rules on personal data processing and cross-border transfers, and Basel III model risk constraints, all at once. An AI system that does not carry that regulatory context as part of its operating logic cannot be safely autonomous at meaningful scale.

What I have been exploring—and what has shaped much of my research over the past two years—is what it would take to build AI workflows in which governance logic is not a layer sitting above the workflow, but the connective tissue running through it. Systems where every step of an agentic sequence carries its regulatory context, where the depth of AI autonomy at any given decision point is bounded by what the governance framework explicitly permits, and where the reasoning chain is preserved not as an afterthought log, but as a first-class artifact of the workflow itself.

This is harder to build than a capable model. But it is the only architecture that can scale in regulated environments without catastrophic exposure.
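To make the idea concrete, here is a minimal Python sketch of a workflow in which each step carries its regulatory references and an autonomy ceiling, and the reasoning trail is produced as part of execution rather than reconstructed afterwards. Everything in it is an illustrative assumption, not a reference implementation: the three autonomy levels, the `Step` and `TraceEntry` structures, the `run_workflow` function, and requirement IDs such as `REQ-SUIT-01` are all hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable


class Autonomy(IntEnum):
    # Ordered so that a higher value means more freedom to act without a human.
    ESCALATE = 0    # stop the workflow and hand off to a human
    CONFIRM = 1     # propose the action, then wait for human confirmation
    AUTONOMOUS = 2  # act without confirmation


@dataclass(frozen=True)
class Step:
    name: str
    regulatory_refs: tuple[str, ...]  # hypothetical IDs of the requirements this step reflects
    requested: Autonomy               # autonomy the agent would like at this step
    ceiling: Autonomy                 # maximum autonomy the governance framework permits
    action: Callable[[dict], dict]    # the work itself: takes and returns workflow state


@dataclass(frozen=True)
class TraceEntry:
    step: str
    regulatory_refs: tuple[str, ...]
    granted: Autonomy
    outcome: str
    rationale: str


def run_workflow(steps: list[Step], state: dict) -> tuple[dict, list[TraceEntry]]:
    """Run steps in order, never exceeding each step's ceiling, and record why."""
    trace: list[TraceEntry] = []
    for step in steps:
        granted = min(step.requested, step.ceiling)  # the ceiling always wins
        if granted is Autonomy.AUTONOMOUS:
            state = step.action(state)
            outcome = "executed"
        elif granted is Autonomy.CONFIRM:
            outcome = "awaiting_confirmation"
        else:
            outcome = "escalated"
        # The trace entry is written as part of execution, not as an afterthought log.
        trace.append(TraceEntry(
            step=step.name,
            regulatory_refs=step.regulatory_refs,
            granted=granted,
            outcome=outcome,
            rationale=f"requested={step.requested.name}, ceiling={step.ceiling.name}",
        ))
        if outcome != "executed":
            break  # pause: no downstream step runs until a human acts
    return state, trace


# Hypothetical three-step financial services sequence.
steps = [
    Step("retrieve_client_record", ("REQ-DATA-01",), Autonomy.AUTONOMOUS, Autonomy.AUTONOMOUS,
         lambda s: {**s, "record": "loaded"}),
    Step("assess_suitability", ("REQ-SUIT-01",), Autonomy.AUTONOMOUS, Autonomy.CONFIRM,
         lambda s: {**s, "suitable": True}),
    Step("generate_compliance_memo", ("REQ-MEMO-01",), Autonomy.AUTONOMOUS, Autonomy.AUTONOMOUS,
         lambda s: {**s, "memo": "drafted"}),
]
final_state, trace = run_workflow(steps, {})
for entry in trace:
    print(entry.step, entry.granted.name, entry.outcome)
```

In this sketch the suitability step asks for full autonomy, but its ceiling only permits `CONFIRM`, so the run pauses there and the memo step never executes. The trace records which step paused, under which requirement, and why, which is the kind of step-level answer the accountability questions earlier in this article call for.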

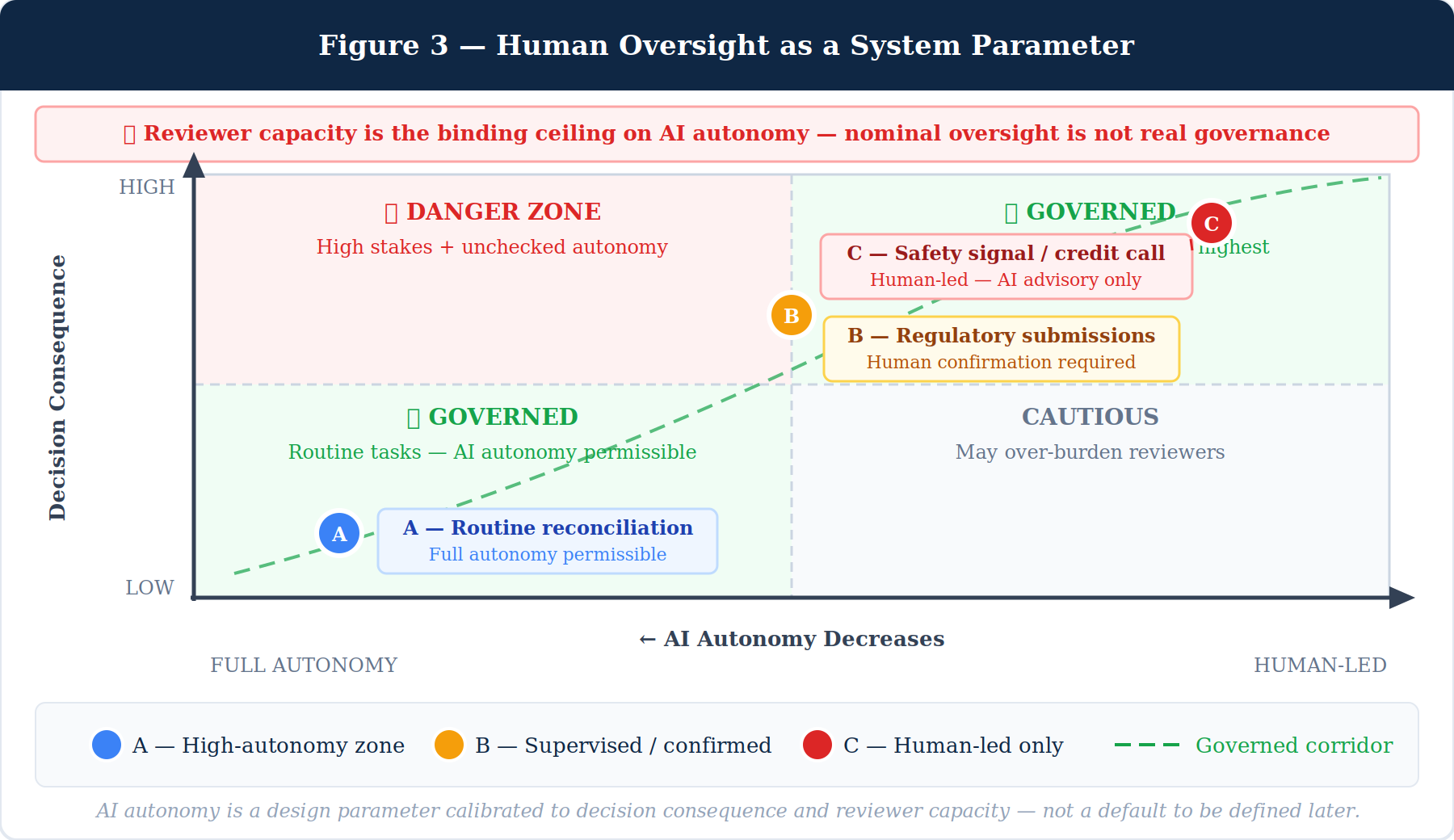

Human Oversight Is Not a Checkpoint. It Is a System Parameter.

One of the most important reframes in responsible AI design—and one that has come up repeatedly in conversations with compliance leaders, risk officers, and operational executives across sectors—is how we think about human oversight.

The conventional framing treats human oversight as a checkpoint: the AI does its work, a human reviews the output, and the process moves forward. That model made sense for narrow, single-output AI tools. It does not scale to agentic systems executing multi-step workflows, and it introduces a failure mode that is well-documented in high-stakes automation research: automation bias, where human reviewers systematically over-trust system outputs when volume is high and time is short [7].

A more structurally sound approach treats human oversight as a system parameter—one that is calibrated based on the nature of the decision, the consequence of error, and the actual capacity of the humans responsible for review. The right level of AI autonomy for routine data reconciliation is not the same as the right level for a regulatory submission, which is not the same as for a credit decision affecting a customer’s financial access, or a safety signal determination that could trigger a product recall.
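One way to express oversight as a parameter rather than a checkpoint is a function that derives an autonomy ceiling from how consequential and how reversible a decision is. The sketch below is a deliberately coarse three-level version of the levels-of-automation idea (the human factors literature uses finer-grained scales). The 1 to 5 consequence rating, the thresholds, and the ratings assigned to the example decisions are hypothetical; in practice they would be set and owned by risk and compliance functions.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    ESCALATE = 0    # a human makes the decision; the system only prepares it
    CONFIRM = 1     # the system proposes; a human confirms before anything proceeds
    AUTONOMOUS = 2  # the system acts; humans monitor after the fact


def autonomy_ceiling(consequence: int, reversible: bool) -> Autonomy:
    """Map a decision's consequence of error to an explicit autonomy ceiling.

    consequence: hypothetical rating from 1 (minor) to 5 (severe).
    reversible: whether an error can be fully undone later.
    The thresholds are illustrative and would be set by governance, not engineering.
    """
    if not 1 <= consequence <= 5:
        raise ValueError("consequence must be rated from 1 to 5")
    if consequence == 5:
        return Autonomy.ESCALATE
    if consequence >= 3 or not reversible:
        return Autonomy.CONFIRM
    return Autonomy.AUTONOMOUS


# Hypothetical ratings for the decision types discussed above.
decisions = {
    "routine data reconciliation": (1, True),
    "regulatory submission": (4, False),
    "credit decision affecting customer access": (4, True),
    "safety signal determination (possible recall)": (5, False),
}
for name, (consequence, reversible) in decisions.items():
    print(f"{name}: {autonomy_ceiling(consequence, reversible).name}")
```

Reviewer capacity, the third input named above, is left out of this function; it constrains how many `CONFIRM` items the system may generate at all, which is a separate calculation.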

Research in human factors and safety-critical systems has long established this principle under different terminology: what aviation calls “levels of automation” and nuclear operations calls “defense in depth” [8]. Applied to governed agentic AI in regulated industries, it means designing workflows where autonomy ceilings are explicit, where the system knows which decisions require human confirmation before proceeding, and where the volume and complexity of what is being surfaced for review is calibrated to what a human reviewer can meaningfully process.

This last point is underappreciated in most AI governance discussions. It is not enough to say “a human is in the loop.” If the loop requires a compliance analyst to review 300 AI-generated exception flags in a single shift, the human is technically present but functionally not governing anything. Meaningful oversight requires designing for reviewer capacity—not as an operational afterthought, but as a constraint that actively shapes how much the AI is permitted to act without confirmation.
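The arithmetic behind the 300-flag example is worth spelling out. Assuming, for illustration, an eight-hour shift spent entirely on review with no breaks or other duties, and a hypothetical 10 minutes for a genuinely considered review of one exception:

```latex
\[
t_{\text{per flag}} = \frac{8\,\text{h} \times 60\,\text{min/h}}{300\ \text{flags}}
  = \frac{480\,\text{min}}{300\ \text{flags}} = 1.6\,\text{min} = 96\,\text{s}
\]
\[
N_{\text{meaningful}} = \frac{480\,\text{min}}{10\,\text{min/flag}} = 48\ \text{flags},
\qquad 300 - 48 = 252\ \text{flags reviewed in name only}
\]
```

Under those assumptions, 252 of the 300 flags, or 84 percent, cannot receive the review the process nominally promises.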

Governance without capacity awareness is theater. The standard should be whether a human reviewer can meaningfully influence outcomes—not merely whether one is nominally present.

What This Means for the Workforce in Regulated Industries

Every substantive conversation I have been part of eventually arrives at the workforce question. It is the right question, and it deserves a more precise answer than the field has generally offered.

The concern most often expressed is displacement: that agentic AI will automate roles that currently employ significant portions of the workforce in compliance, operations, and back-office functions. That concern is not unfounded. The same McKinsey Global Institute analysis found that roles centered on data collection, processing, and routine compliance documentation face the highest automation exposure across financial services, life sciences, and insurance, with generative AI bringing that timeline forward relative to earlier estimates [4].

But the framing of displacement misses the more significant workforce transformation: the emergence of roles that do not yet exist at scale, for which regulated organizations are largely unprepared.

Governed agentic AI workflows require human professionals who can do something qualitatively different from what most current roles demand: not just use AI tools, but exercise judgment about AI tools. This includes the capacity to evaluate whether an AI-generated workflow specification correctly reflects the regulatory environment it claims to satisfy; to identify when an AI system is operating outside its intended governance boundaries; and to make meaningful decisions at the escalation points where agentic systems are designed to pause and wait for human input.

These are not skills that emerge from general AI literacy training alone. They require deep domain expertise (regulatory fluency, operational judgment, and institutional knowledge of risk) combined with a new layer of AI governance competency. The professionals most likely to anchor this transition are experienced compliance officers, risk analysts, pharmacovigilance specialists, actuaries, and operational leads who add AI governance to their existing expertise, rather than technologists trying to acquire regulated-domain knowledge from the outside.

Leadership teams thinking seriously about AI workforce strategy should be asking: which of our existing domain experts need to develop governed AI oversight as a core competency, and how do we build the organizational structures—the review boards, model risk committees, governance working groups, and escalation pathways—that those roles will inhabit?

What Leaders Need to Demand

If I were advising a Chief Risk Officer, Chief Compliance Officer, or Chief Digital Officer in a regulated organization on what to require of every agentic AI deployment moving forward, I would distill it to four things.

First: Regulatory traceability. Every AI workflow that touches a consequential operational, financial, or compliance decision must be able to demonstrate which regulatory requirements it was designed to satisfy, and how. Not a summary. A traceable linkage from each step in the workflow to the specific regulation, policy, or standard it reflects. If a vendor cannot provide this, the system is not audit-ready and should not be permitted to act autonomously.
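A traceability requirement like this can be checked mechanically before deployment. The sketch below assumes a hypothetical requirements register keyed by internal IDs and a mapping from each workflow step to the IDs it claims to satisfy; it flags steps with no linkage and links that point at requirements missing from the register. The register entries, step names, and IDs are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str  # hypothetical internal identifier
    source: str  # the regulation, policy, or standard the requirement comes from
    clause: str  # the specific provision the workflow step reflects


def traceability_gaps(step_links: dict[str, list[str]],
                      register: dict[str, Requirement]) -> dict[str, list[str]]:
    """Return, per workflow step, every reason the step is not traceable."""
    gaps: dict[str, list[str]] = {}
    for step, req_ids in step_links.items():
        problems = [] if req_ids else ["no linked requirement"]
        problems += [f"unknown requirement {r}" for r in req_ids if r not in register]
        if problems:
            gaps[step] = problems
    return gaps


register = {
    "REQ-001": Requirement("REQ-001", "21 CFR Part 11", "audit trails"),
    "REQ-002": Requirement("REQ-002", "internal SOP (hypothetical)", "case narrative review"),
}
step_links = {
    "capture_case": ["REQ-001"],
    "draft_case_narrative": ["REQ-002", "REQ-099"],  # REQ-099 is not in the register
    "route_for_review": [],                          # no linkage at all
}
gaps = traceability_gaps(step_links, register)
audit_ready = not gaps
print(gaps)
print("audit-ready:", audit_ready)
```

An empty result is a necessary condition for audit-readiness, not a sufficient one: the check confirms that every linkage exists, not that the step actually does what the linked requirement demands.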

Second: Explicit autonomy boundaries. AI systems should not be deployed with undefined autonomy. Every agentic workflow should specify, in advance, which decision types the system may resolve independently, which require human confirmation before proceeding, and under what conditions the workflow stops entirely and escalates. These boundaries should be documented, reviewed by compliance and risk functions, and revisited whenever the regulatory environment changes.
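Boundaries of this kind can be written down as a declarative policy that the workflow consults before every decision. In the minimal sketch below, which is an illustration under stated assumptions rather than any vendor's format, stop conditions override all documented boundaries, and any decision type the policy does not mention escalates by default, so autonomy is never undefined. The decision types, the 0.8 confidence cut-off, the reviewing functions, and the review date are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Callable


class Boundary(Enum):
    AUTONOMOUS = "resolve independently"
    CONFIRM = "require human confirmation before proceeding"
    ESCALATE = "stop the workflow and escalate"


@dataclass
class AutonomyPolicy:
    boundaries: dict[str, Boundary]                     # decision type -> documented boundary
    stop_conditions: dict[str, Callable[[dict], bool]]  # name -> predicate on decision context
    reviewed_by: tuple[str, ...]                         # functions that signed off on the policy
    last_reviewed: date                                 # revisit when regulations change

    def decide(self, decision_type: str, context: dict) -> tuple[Boundary, str]:
        # Stop conditions override every documented boundary.
        for name, triggered in self.stop_conditions.items():
            if triggered(context):
                return Boundary.ESCALATE, f"stop condition: {name}"
        # Undefined autonomy is never granted: unknown decision types escalate.
        if decision_type not in self.boundaries:
            return Boundary.ESCALATE, "undefined decision type"
        return self.boundaries[decision_type], "documented boundary"


policy = AutonomyPolicy(
    boundaries={
        "reconcile_records": Boundary.AUTONOMOUS,
        "draft_compliance_memo": Boundary.CONFIRM,
        "close_regulatory_exception": Boundary.ESCALATE,
    },
    stop_conditions={
        # A missing confidence score counts as low confidence (fail closed).
        "low_model_confidence": lambda ctx: ctx.get("confidence", 0.0) < 0.8,
        "sanctions_match": lambda ctx: ctx.get("sanctions_match", False),
    },
    reviewed_by=("Compliance", "Risk"),
    last_reviewed=date(2024, 6, 30),  # illustrative date
)

print(policy.decide("reconcile_records", {"confidence": 0.95}))
print(policy.decide("approve_credit_limit", {"confidence": 0.99}))
```

Failing closed is the key design choice: a decision type added to the workflow without a matching policy entry stops and escalates instead of inheriting autonomy nobody approved.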

Third: Governance that updates when regulations do. Regulations change. Regulatory guidance evolves. Internal policies shift with business conditions. A system whose governance logic is hardcoded to last year’s regulatory landscape is a liability, not an asset. The architecture must support regulatory updates that propagate to the workflows they govern—not require a full rebuild every time the environment shifts.
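One way to avoid hardcoding is to keep governance rules in a versioned registry that workflows read at run time, and to record which workflows depend on which rule so that an update also identifies what must be revalidated. The sketch below assumes a single hypothetical rule, `POLICY-THRESHOLD-A`, whose threshold changes between versions; the class and function names are illustrative and not an existing library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleVersion:
    rule_id: str      # hypothetical identifier for a regulation or internal policy rule
    version: int
    threshold: float  # the parameter the rule imposes on workflows (illustrative)


class RuleRegistry:
    """Single source of truth for governance rules; workflows look rules up at run time."""

    def __init__(self) -> None:
        self._current: dict[str, RuleVersion] = {}
        self._dependents: dict[str, set[str]] = {}

    def subscribe(self, workflow: str, rule_id: str) -> None:
        self._dependents.setdefault(rule_id, set()).add(workflow)

    def publish(self, rule: RuleVersion) -> set[str]:
        """Install a new rule version and return the workflows that depend on it."""
        prior = self._current.get(rule.rule_id)
        if prior is not None and rule.version <= prior.version:
            raise ValueError(f"{rule.rule_id}: version must increase past {prior.version}")
        self._current[rule.rule_id] = rule
        return set(self._dependents.get(rule.rule_id, set()))

    def current(self, rule_id: str) -> RuleVersion:
        return self._current[rule_id]


def needs_manual_review(registry: RuleRegistry, amount: float) -> bool:
    # The workflow reads the threshold at run time instead of hardcoding it.
    return amount >= registry.current("POLICY-THRESHOLD-A").threshold


registry = RuleRegistry()
registry.publish(RuleVersion("POLICY-THRESHOLD-A", 1, 10_000.0))
registry.subscribe("transaction_review_workflow", "POLICY-THRESHOLD-A")
registry.subscribe("periodic_reporting_workflow", "POLICY-THRESHOLD-A")

print(needs_manual_review(registry, 7_500.0))
impacted = registry.publish(RuleVersion("POLICY-THRESHOLD-A", 2, 5_000.0))
print(sorted(impacted))
print(needs_manual_review(registry, 7_500.0))
```

Publishing version 2 changes the behavior of the dependent workflows without a rebuild, and the returned set gives compliance and risk functions a concrete list of workflows to revalidate against the new rule.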

Fourth: Meaningful human oversight, not nominal oversight. Every AI deployment should be accompanied by a clear answer to the question: what is the realistic capacity of the humans responsible for reviewing this system’s outputs, and does the system’s level of autonomy fit within that capacity? If the answer cannot be quantified, the governance is not real.
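The capacity question can be made quantitative with very little machinery. The sketch below compares how many items a system surfaces for review per shift with how many a team can review meaningfully. The team size, the 360 review minutes per reviewer, and the 10 minutes per meaningful review are illustrative assumptions that an organization would need to measure for itself, and the function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewCapacity:
    reviewers: int
    review_minutes_per_reviewer: int  # minutes per shift actually available for review
    minutes_per_review: int           # time a meaningful review takes; measure it, don't assume it

    def items_per_shift(self) -> int:
        return (self.reviewers * self.review_minutes_per_reviewer) // self.minutes_per_review


def oversight_fit(capacity: ReviewCapacity, items_surfaced: int) -> dict:
    """Compare what the system surfaces for review with what reviewers can truly review."""
    cap = capacity.items_per_shift()
    return {
        "capacity": cap,
        "demand": items_surfaced,
        "utilization": round(items_surfaced / cap, 2) if cap else float("inf"),
        "fits": items_surfaced <= cap,
        # Items beyond capacity must be held, not waved through without review.
        "held_for_later": max(0, items_surfaced - cap),
    }


team = ReviewCapacity(reviewers=3, review_minutes_per_reviewer=360, minutes_per_review=10)
print(oversight_fit(team, items_surfaced=300))
print(oversight_fit(team, items_surfaced=90))
```

With three reviewers under these assumptions, capacity is 108 items per shift, so surfacing 300 items leaves 192 that cannot be meaningfully reviewed. That gap is a signal to lower the system's throughput or add reviewers; the excess is held rather than approved by default.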

The Longer View

The conversations I have been part of over the past eighteen months—in panel discussions, working groups, and direct exchanges with professionals across regulated sectors and geographies—have reinforced something I believe deeply: the most important work in AI for regulated industries right now is not building more capable models. It is building the governance architecture that makes those models trustworthy enough to act consequentially in the environments where the stakes are highest.

That work is harder than the capability work. It requires expertise that crosses regulatory, domain, technical, and operational boundaries in ways that no single discipline has fully worked out. It requires humility about what AI systems can and cannot be trusted to do unsupervised. And it requires a willingness to design constraints into systems that most developers would prefer to leave unconstrained.

But it is the only path that leads to AI that professionals, customers, and regulators across these industries can actually trust. And trust, in the end, is the only foundation on which meaningful adoption can be built.

The age of agentic AI in regulated industries is not coming. It is here. The question is whether our governance thinking will be ready to meet it.

References

1. European Parliament and Council of the European Union. “Regulation (EU) 2024/1689 (Artificial Intelligence Act).” Official Journal of the European Union, 2024.
2. Basel Committee on Banking Supervision. “Principles for the sound management of operational risk: AI and machine learning considerations.” Bank for International Settlements, 2023.
3. U.S. Food and Drug Administration. “Artificial Intelligence and Machine Learning (AI/ML)-Enabled Medical Devices.” FDA.gov, 2024 update.
4. McKinsey Global Institute. “The economic potential of generative AI: The next productivity frontier.” McKinsey & Company, 2023.
5. World Economic Forum. “AI in Financial Services: Navigating the Governance and Regulatory Landscape.” WEF, 2024.
6. Floridi L, et al. “An ethical framework for a good AI society: Opportunities, risks, principles, and recommendations.” Minds and Machines, 2018.
7. Parasuraman R, Manzey D. “Complacency and bias in human use of automation: An attentional integration.” Human Factors, 2010.
8. Sheridan TB. “Supervisory control of remote manipulation, vehicles, and dynamic processes.” Advances in Man-Machine Systems Research, 1986.
9. Brookings Institution. “Automation and AI: Workforce exposure in financial services, life sciences, and insurance.” Brookings, 2024.