AI/ML/GenAI & Data Products

Healthcare

Governed Agentic AI

Product Leadership

Most deterioration alerts in hospitals are loud, late, and lonely — loud because they pop up everywhere, late because they trigger when the patient is already in trouble, and lonely because they arrive without context, reasoning, or a next step. A better system does not shout harder. It notices earlier, prioritizes better, and hands the care team a small, executable plan. This article lays out how to design that system as a governed agentic AI workflow — and why governance is not a layer on top of the design, but the design itself.

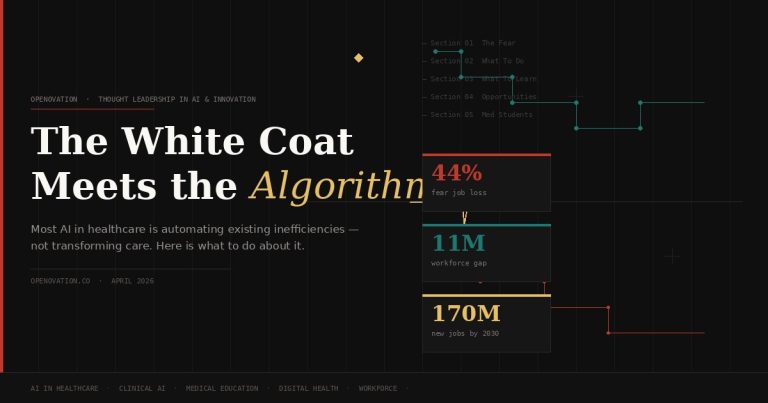

Why most “predict deterioration” products quietly fail

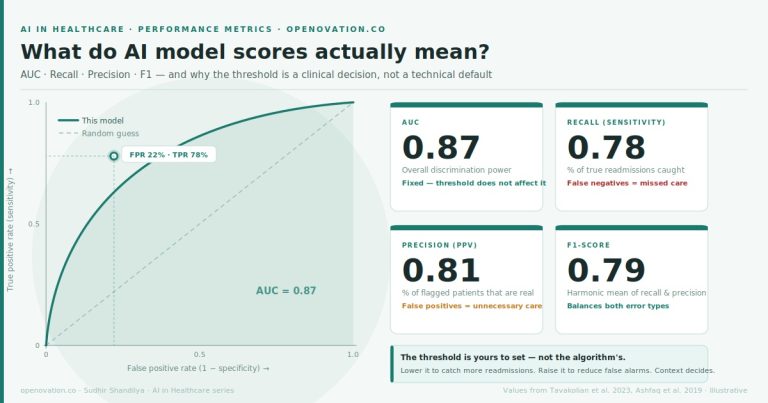

The use case is familiar: a hospitalized adult patient on a non-ICU ward who may be trending toward respiratory failure, sepsis, arrhythmia, or emergent ICU transfer. The predictive analytics literature is rich, and well-run pragmatic trials have shown that passively displaying risk trajectories on acute care wards can meaningfully change outcomes. The technology is not the hard part anymore.

The hard part is that most deployed deterioration products solve the wrong problem. They predict. They do not help. Clinicians are drowning in point alerts, status flags, and dashboards that compete with each other for attention. Adding another probability score to that pile does not save a life — it just adds a number.

The real product goal is not "predict deterioration." It is to:

- Notice earlier than human scanning of the chart would have.

- Prioritize better across a busy unit with scarce attention.

- Act faster with a short, tightly scoped set of next steps.

- Add less noise, not more.

That reframing is not cosmetic. It changes every architectural choice downstream — what the agents do, where they surface, who sees what, and how the system closes the loop when a clinician acts or overrides.

The end-to-end flow, without the vendor gloss

A well-designed system listens to data the hospital already produces — new vitals, lab results, nursing assessments, medication administrations, oxygen changes, rapid response documentation, and transfer orders. It does not need exotic signals. It needs to use ordinary signals exceptionally well, and continuously reassess as new data arrives.

Seven specialized agents, each with a narrow job, handle the journey from raw EHR event to clinical action and back into a learning loop. The diagram below is the full picture — we will unpack each agent after.

1. The Risk Synthesis Agent

The first agent computes a continuously updated deterioration risk score and a short-horizon trajectory — risk over the next 6 to 12 hours. Importantly, it produces a trajectory, not a static flag.

What it produces

- Current risk band: low, watch, high.

- Short-term trend: rising, stable, falling.

- Uncertainty and confidence indicator.

- Top contributing signals in clinician language — rising oxygen need, worsening creatinine, tachycardia, hypotension.

Adoption pattern

Do not lead with raw model math. Lead with a one-line clinical summary: “Risk rising over 6h; main drivers are increasing O2 need, RR, and lactate trend.” Raw probabilities live one click deeper, for the clinicians who want them.
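As a minimal sketch of that pattern, the Risk Synthesis output can be modeled as a small record whose headline is built from the trend and top drivers, with the raw probability stored but left out of the one-liner. The class name `RiskAssessment`, its field names, and the `headline` method are illustrative assumptions, not part of any specific product or EHR API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RiskAssessment:
    """Hypothetical output record for the Risk Synthesis Agent."""
    band: str            # "low", "watch", or "high"
    trend: str           # "rising", "stable", or "falling"
    horizon_hours: int   # short-horizon window, e.g. 6 to 12
    confidence: str      # coarse indicator, e.g. "low", "moderate", "high"
    drivers: List[str] = field(default_factory=list)  # clinician-language signals
    probability: float = 0.0  # raw model output, shown only one click deeper

    def headline(self, max_drivers: int = 3) -> str:
        """Build the one-line clinical summary; the raw probability is omitted."""
        text = f"Risk {self.trend} over {self.horizon_hours}h"
        top = self.drivers[:max_drivers]
        if not top:
            return text + "."
        if len(top) == 1:
            return f"{text}; main driver is {top[0]}."
        if len(top) == 2:
            joined = f"{top[0]} and {top[1]}"
        else:
            joined = ", ".join(top[:-1]) + f", and {top[-1]}"
        return f"{text}; main drivers are {joined}."


assessment = RiskAssessment(
    band="high",
    trend="rising",
    horizon_hours=6,
    confidence="moderate",
    drivers=["increasing O2 need", "RR", "lactate trend"],
    probability=0.31,  # made-up value for illustration only
)
print(assessment.headline())
```

Keeping `probability` on the record but out of `headline()` mirrors the idea that the number is available to clinicians who want it without being the first thing they see.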

2. The Workflow Routing Agent

Timing and recipient selection matter at least as much as prediction quality. The Workflow Routing Agent decides who should see what, and when, based on role and urgency.

- Nurse: early watch-state nudges tied to reassessment tasks.

- Covering physician: higher-confidence or persistent high-risk cases.

- Charge nurse: unit-level prioritization queue.

- Rapid response team: threshold-crossing escalations or unresolved high-risk cases.
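The role rules above can be sketched as a single routing function. This is an illustration under stated assumptions: the function name `route_recipients`, the flag names, and the exact conditions (for example, treating every non-low patient as a charge nurse queue entry) are hypothetical choices, and a real deployment would take them from local clinical policy.

```python
from typing import List


def route_recipients(
    band: str,                 # "low", "watch", or "high"
    confidence: str,           # "low", "moderate", or "high"
    persistent_high: bool,     # high risk sustained across reassessments
    crossed_threshold: bool,   # a rapid response threshold was crossed
    unresolved_high: bool,     # high risk with no completed action so far
) -> List[str]:
    """Return the roles that should see this signal, in order of escalation."""
    recipients: List[str] = []
    if band in ("watch", "high"):
        recipients.append("nurse")         # watch-state nudge tied to reassessment
        recipients.append("charge_nurse")  # unit-level prioritization queue
    if band == "high" and (confidence == "high" or persistent_high):
        recipients.append("covering_physician")
    if crossed_threshold or (band == "high" and unresolved_high):
        recipients.append("rapid_response_team")
    return recipients


print(route_recipients("watch", "moderate", False, False, False))
print(route_recipients("high", "high", False, True, False))
```

Writing the rules as plain conditionals keeps the routing policy readable and reviewable by the clinical leads who own it.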

Noise control is a product feature

- Suppress duplicate notifications for a defined cooling interval.

- Bundle low-severity issues into shift huddles or watchlists.

- Escalate only when risk is rising and actionable conditions are met.
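A minimal sketch of those three noise controls follows, assuming a hypothetical `NoiseGate` class with an in-memory record of the last notification per patient and recipient. The 60-minute default cooling interval and the three outcome labels are placeholders, not recommended values.

```python
from datetime import datetime, timedelta
from typing import Dict, Tuple


class NoiseGate:
    """Decide whether a signal is escalated now, bundled for later, or suppressed."""

    def __init__(self, cooldown: timedelta = timedelta(minutes=60)):
        self.cooldown = cooldown
        self._last_sent: Dict[Tuple[str, str], datetime] = {}

    def decide(self, patient_id: str, recipient: str, band: str,
               trend: str, actionable: bool, now: datetime) -> str:
        # Escalate only when risk is rising and there is something to act on.
        escalation_worthy = band != "low" and trend == "rising" and actionable
        if not escalation_worthy:
            return "bundle"  # goes to the shift huddle or watchlist
        key = (patient_id, recipient)
        last = self._last_sent.get(key)
        if last is not None and now - last < self.cooldown:
            return "suppress"  # duplicate inside the cooling interval
        self._last_sent[key] = now
        return "escalate"


gate = NoiseGate(cooldown=timedelta(minutes=30))
t0 = datetime(2025, 1, 1, 8, 0)
print(gate.decide("p1", "nurse", "high", "rising", True, t0))
print(gate.decide("p1", "nurse", "high", "rising", True, t0 + timedelta(minutes=10)))
```

The cooling interval is keyed by patient and recipient, so a suppressed duplicate for the nurse does not block a first notification to the physician.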

3. The Clinical Reasoning Support Agent

This is the agent that earns trust. Instead of throwing a blunt “patient deteriorating” alert, it converts the risk signal into a structured, intermediate aid that matches how clinicians actually think.

- Suggested hypotheses: fluid overload, occult infection, arrhythmia, medication-related sedation, pulmonary process.

- Missing information that would reduce uncertainty: repeat vitals, ABG/VBG, lactate, chest X-ray, medication review, urine output check.

- Contradictory findings called out: “High risk but no recent oxygen documentation,” or “sedative increase may explain respiratory trend.”

This is the difference between an alert and a teammate. The agent helps the clinician answer the two questions they were already asking: What might be going on? and What should I check next?

4. The Action Bundle Agent

This agent proposes scoped, executable next steps, ranked by impact and burden. The adoption literature is blunt about this: generic alerts fail, actionable micro-interventions succeed.

- Recheck vitals in 15 minutes.

- Order lactate and CBC.

- Review opioid or sedative administration in the past 4 hours.

- Consider bedside assessment by physician within 30 minutes.

- Pre-drafted rapid response criteria if trend worsens.

The UI is not a pop-up. It is one-click or one-tap actions embedded in the surfaces clinicians already use — nursing task list, physician order composer, rapid response workflow — with the reason visible next to each suggestion, and every pre-filled order editable before signing.
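One way to picture "ranked by impact and burden" is a simple score per candidate action, with the reason carried alongside each suggestion. The sketch below is an assumption-heavy illustration: the `SuggestedAction` type, the 0 to 1 scales, the `impact - burden` score, and the numbers themselves are all hypothetical, and a real system would derive them from clinical input rather than hard-coded values.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SuggestedAction:
    label: str      # what the clinician sees on the one-tap action
    reason: str     # shown next to the suggestion
    impact: float   # hypothetical 0..1 estimate of clinical value
    burden: float   # hypothetical 0..1 estimate of effort or interruption


def build_bundle(candidates: List[SuggestedAction], max_items: int = 3) -> List[SuggestedAction]:
    """Keep the few actions with the best impact-minus-burden score."""
    ranked = sorted(candidates, key=lambda a: a.impact - a.burden, reverse=True)
    return ranked[:max_items]


candidates = [
    SuggestedAction("Bedside physician assessment within 30 min", "persistent high risk", 0.8, 0.6),
    SuggestedAction("Recheck vitals in 15 minutes", "rising RR", 0.6, 0.1),
    SuggestedAction("Review opioid/sedative administration (past 4h)", "possible sedation effect", 0.5, 0.2),
    SuggestedAction("Order lactate and CBC", "possible occult infection", 0.7, 0.3),
]
for action in build_bundle(candidates):
    print(f"{action.label} (why: {action.reason})")
```

Capping the bundle at a small `max_items` is the code-level expression of "a few high-value next steps" rather than an exhaustive checklist.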

5. The Documentation and Communication Agent

Handoffs are where information dies. The Communication Agent prepares concise, role-specific summaries: a nurse-to-physician escalation note draft, a physician assessment snippet, and a rapid response briefing card that captures why the patient was flagged, what changed, and what has already been done.

Two quiet benefits hide inside this agent. It saves documentation time, which is a universal clinician pain. And it produces traceable, auditable evidence of how the AI informed care — which matters enormously when governance, quality review, or (eventually) regulators come asking.

6 and 7. The escalation and closure loop

A system that only fires forward is incomplete. The system must close the loop by tracking whether recommended actions were completed, deferred, or overridden — and why.

- Acknowledged and acted on.

- Acknowledged and deferred.

- Overridden as not clinically appropriate.

- Escalated to higher level of care.

- Resolved because risk trend normalized.
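The five outcomes above map naturally onto an enumeration plus a record that refuses to store a deferral or override without a reason. This is a sketch, assuming hypothetical names (`Disposition`, `ClosureRecord`, `override_rate`); the override rate is just one example of a metric a governance team might read from the loop.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, Optional


class Disposition(Enum):
    ACTED_ON = "acknowledged and acted on"
    DEFERRED = "acknowledged and deferred"
    OVERRIDDEN = "overridden as not clinically appropriate"
    ESCALATED = "escalated to higher level of care"
    RESOLVED = "resolved because risk trend normalized"


@dataclass(frozen=True)
class ClosureRecord:
    suggestion_id: str
    disposition: Disposition
    reason: Optional[str] = None

    def __post_init__(self) -> None:
        # The "and why" matters most when a clinician defers or overrides.
        needs_reason = self.disposition in (Disposition.DEFERRED, Disposition.OVERRIDDEN)
        if needs_reason and not self.reason:
            raise ValueError("deferrals and overrides must record a reason")


def override_rate(records: Iterable[ClosureRecord]) -> float:
    """Share of closed suggestions that clinicians overrode."""
    items = list(records)
    if not items:
        return 0.0
    counts = Counter(r.disposition for r in items)
    return counts[Disposition.OVERRIDDEN] / len(items)


log = [
    ClosureRecord("s1", Disposition.ACTED_ON),
    ClosureRecord("s2", Disposition.OVERRIDDEN, reason="known baseline tachycardia"),
    ClosureRecord("s3", Disposition.RESOLVED),
    ClosureRecord("s4", Disposition.DEFERRED, reason="patient off unit for imaging"),
]
print(override_rate(log))
```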

Where each agent plugs into the EHR

A useful mental model: every routine EHR event is a potential agent trigger, and every trigger should resolve to a workflow-visible output rather than a separate dashboard.

| EHR event | Agent triggered | What gets surfaced | Workflow value |
|---|---|---|---|
| New vital signs charted | Risk Synthesis | Updated risk trend in patient header and watchlist | Continuous monitoring without manual polling |
| New lab result posted | Risk Synthesis + Reasoning | Revised drivers and suggested next checks | Converts data arrival into usable interpretation |
| Medication administered | Risk Synthesis + Reasoning | Context note, e.g. sedation-related risk contribution | Prevents decontextualized alerts |
| Nurse shift assessment complete | Routing + Action Bundle | Task recommendations in nursing workflow | Fits existing reassessment cadence |
| Physician opens chart | Communication + Reasoning | Concise risk summary, hypotheses, recommended actions | Supports decision-making at point of review |
| Transfer order or ICU consult | Communication | Escalation summary and rationale | Speeds safer transitions |
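The table reads directly as a dispatch map from event type to the agents it wakes up. The sketch below assumes hypothetical event and agent identifiers (for example `vitals_charted`, `risk_synthesis`); the real names would come from whatever event feed the hospital's EHR integration exposes.

```python
from typing import Dict, Tuple

# Event type -> agents triggered, transcribed from the table above.
# All identifiers are illustrative, not names from any EHR vendor API.
TRIGGERS: Dict[str, Tuple[str, ...]] = {
    "vitals_charted": ("risk_synthesis",),
    "lab_result_posted": ("risk_synthesis", "reasoning"),
    "medication_administered": ("risk_synthesis", "reasoning"),
    "nurse_shift_assessment_complete": ("routing", "action_bundle"),
    "physician_opens_chart": ("communication", "reasoning"),
    "transfer_order_or_icu_consult": ("communication",),
}


def agents_for(event_type: str) -> Tuple[str, ...]:
    """Return the agents to run for an event; unknown events trigger nothing."""
    return TRIGGERS.get(event_type, ())


print(agents_for("lab_result_posted"))
print(agents_for("diet_order_changed"))
```

Keeping the mapping as data rather than scattered conditionals makes it easy to review with clinical stakeholders and to audit when a trigger is added or removed.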

One signal, four surfaces

The Routing Agent’s job is to make the same underlying event feel tailored for each role. A rising-risk signal from Room 412 should not arrive identically on a nurse’s task list, a physician’s chart header, a charge nurse’s watchlist, and a rapid response team’s briefing card. Each role needs a different payload to act usefully in their workflow.

- Risk → reason → action chain. Never stop at a score. Always progress from risk signal to clinical interpretation to recommended next action.

- Role-specific views. Nurse, physician, charge nurse, and rapid response team each get a different payload from the same underlying event.

- Embedded, not bolted on. Surface outputs in census lists, chart headers, and order workflows — not a separate portal.

- Action bundles over generic alerts. A few high-value next steps with one-click execution outperform “risk is high” every time.

- Closed-loop learning. Capture acknowledgments, overrides, and outcomes so the system improves locally and governance teams can see whether it is helping or harming.

The part most often misunderstood and poorly implemented: governed agentic AI

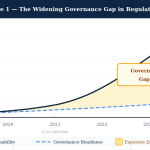

Everything above describes what the system does. The harder — and more consequential — design question is how you govern it in production. In regulated, high-stakes environments like hospitals, governance is not a compliance afterthought bolted on before launch. It is the architecture.

I think about this through PRAXIGOV™, the governance framework I have been developing and refining over the last few years of building agentic systems in regulated domains. It is the scaffolding I keep reaching for when the temptation is to ship the clever model and worry about governance later.

Three paths for autonomy, chosen per decision

Agentic AI is not a single autonomy dial. Different decisions in the same workflow deserve different levels of human control — and the routing of a decision to its correct path is itself a first-class design problem.

The single most important design decision in a governed agentic system is knowing which path each decision belongs on, and writing that down before you start building. Most failed clinical AI pilots blurred these boundaries and let the model creep into decisions it was never sanctioned for.

The deterministic compliance pipeline inside the agent stack

Within the “supervised-autonomous” and “assistive” paths, there is a further discipline that matters: the internal pipeline should try deterministic resolution before reaching for generative reasoning. In practice, I structure it as three stages — in strict order.

This matters because it inverts the usual architecture. Most teams start with “let the LLM handle it, and we’ll add guardrails.” A governed system starts with “deterministic by default, and only reach for the LLM when we must.” The LLM is a tool of last resort, not first response. In regulated settings, that inversion is the whole game.
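The ordering itself, deterministic first and generative last, can be sketched in a few lines. The three stages are not enumerated here, so this example does not try to reproduce them; it only illustrates the inversion with a hypothetical chain of plain rule functions followed by a stand-in for an LLM call. The names `resolve`, `missing_oxygen_documentation`, and `fake_llm` are all assumptions made for illustration.

```python
from typing import Callable, Optional, Sequence, Tuple

Case = dict
Resolver = Callable[[Case], Optional[str]]


def missing_oxygen_documentation(case: Case) -> Optional[str]:
    """Hypothetical deterministic rule: a plain data check, no model involved."""
    if case.get("risk_band") == "high" and case.get("last_spo2") is None:
        return "High risk but no recent oxygen documentation; request SpO2 recheck."
    return None


def resolve(case: Case, deterministic_rules: Sequence[Resolver],
            generative_fallback: Resolver) -> Tuple[str, str]:
    """Try every deterministic rule in order; call the generative step only if none fires."""
    for rule in deterministic_rules:
        answer = rule(case)
        if answer is not None:
            return answer, f"deterministic:{rule.__name__}"
    answer = generative_fallback(case)
    if answer is None:
        return "No resolution; route to human review.", "unresolved"
    return answer, "generative"


def fake_llm(case: Case) -> Optional[str]:
    """Stand-in for a generative call; a real one would sit behind its own controls."""
    return "Draft reasoning summary for clinician review."


print(resolve({"risk_band": "high", "last_spo2": None}, [missing_oxygen_documentation], fake_llm))
print(resolve({"risk_band": "high", "last_spo2": 93}, [missing_oxygen_documentation], fake_llm))
```

Returning the source of each answer alongside the answer gives the audit trail a simple way to show how often the generative path was actually needed.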

Governance artifacts to build on day one

Capacity-aware throttling: the pattern almost nobody builds

Here is a design principle that surprised me the first time it fully clicked, and that I now consider non-negotiable in any agentic system deployed to human reviewers: throttle AI output based on human reviewer capacity, not model confidence alone.

A deterioration system running at full throttle can easily emit more “high-confidence” suggestions than a unit’s nursing staff can reasonably action. When that happens, adoption collapses — not because the model is wrong, but because the humans in the loop are saturated. The system needs to sense the reviewer’s current load and modulate what it surfaces, defer lower-priority nudges to the next shift huddle, and escalate only when genuinely necessary.
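To make the principle concrete, here is a minimal sketch of capacity-aware throttling. It is an illustration only and not a description of any patented or production method: the `Suggestion` type, the `must_escalate` flag, and the idea of measuring load as open tasks against a fixed capacity are simplifying assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Suggestion:
    patient_id: str
    priority: float              # hypothetical scale; higher means more urgent
    must_escalate: bool = False  # genuinely necessary, bypasses the throttle


def throttle(suggestions: List[Suggestion], open_tasks: int,
             capacity: int) -> Tuple[List[Suggestion], List[Suggestion]]:
    """Split suggestions into (surface now, defer to next huddle) by reviewer load."""
    free_slots = max(capacity - open_tasks, 0)
    urgent = [s for s in suggestions if s.must_escalate]
    rest = sorted(
        (s for s in suggestions if not s.must_escalate),
        key=lambda s: s.priority,
        reverse=True,
    )
    # Urgent items always surface; they also consume free slots.
    remaining = max(free_slots - len(urgent), 0)
    return urgent + rest[:remaining], rest[remaining:]


queue = [
    Suggestion("bed-3", 0.9),
    Suggestion("bed-7", 0.5),
    Suggestion("bed-9", 0.7),
    Suggestion("bed-12", 0.95, must_escalate=True),
]
now, later = throttle(queue, open_tasks=3, capacity=5)
print([s.patient_id for s in now], [s.patient_id for s in later])
```

Note that model confidence never appears in the decision about how many items to surface; the only inputs are the reviewer's load and the relative priority of what is waiting.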

This is the principle behind the capacity-aware governance throttle patent I filed in late 2025 under NextBrightPath. The same principle applies far beyond healthcare — any agentic AI system that produces output faster than humans can meaningfully consume it is quietly building an adoption cliff.

Where the value actually lands

When a system like this is implemented thoughtfully — with agents that reason, governance that is architectural, and UI that embeds where clinicians already work — the value shows up on three levels:

- Clinical. Earlier recognition of deterioration, faster escalation, fewer missed worsening patients. The published experience with deployed AI-informed deterioration workflows has been meaningful where they were integrated with care, and absent where they were not.

- Operational. Better prioritization of scarce nursing and rapid response resources, less manual chart scanning, better shift handoffs. The operational win is quieter than the clinical one but often larger in aggregate.

- Adoption and trust. The AI feels like a teammate because it supports reasoning, proposes next steps, and preserves clinician authority. Adoption is not a marketing problem — it is a product design problem, and it is won or lost in the first 50 interactions a clinician has with the system.

Closing thought

If this resonated, you may also enjoy earlier OpenOvation articles on process mining in pharma operations, navigating the AI landscape by focusing on what matters, and the ML roadmap for AI product managers. The governance thinking here draws on PRAXIGOV™, a framework I developed.